The Complete LookML Guide for 2026: Core Concepts and the dbt Migration Decision

A practitioner's guide to LookML in 2026: what views, dimensions, measures, and explores actually do, how LookML compares to the dbt Semantic Layer, and how to make the migration decision for enterprise teams.

This week, Google officially separated Looker from Data Studio. For those of us who have spent years implementing LookML for enterprise clients, the announcement felt like a long-overdue correction. Looker is now explicitly the enterprise semantic layer platform. Not the dashboarding tool. Not the free exploration product. This LookML guide is for the teams that decision directly affects: companies that are invested in Looker and considering doubling down, and companies sitting on a mature LookML implementation and wondering whether 2026 is the year to migrate to the dbt Semantic Layer.

Not a vendor comparison. A practitioner's view from someone who has built both.

What LookML Actually Is

LookML stands for Looker Modeling Language. It is a proprietary, dependency-based language for creating semantic data models on top of your data warehouse. You use it to describe dimensions, aggregates, calculations, and relationships in SQL — and Looker uses those descriptions to dynamically generate queries on behalf of your users.

The core concept is DRY: Don't Repeat Yourself. In LookML, you define your business logic once. Every time a user drags a field into an Explore, runs a report, or builds a dashboard, Looker generates the appropriate SQL from that single definition. Your revenue metric does not live in twelve dashboard queries with slightly different logic. It lives in one place, and every downstream query reads from it.

This is what makes Looker genuinely different from a dashboarding tool. LookML is not a visualization product. It is a semantic data layer expressed in code — the governed interface between your warehouse and every human and machine that needs to ask questions about your data.

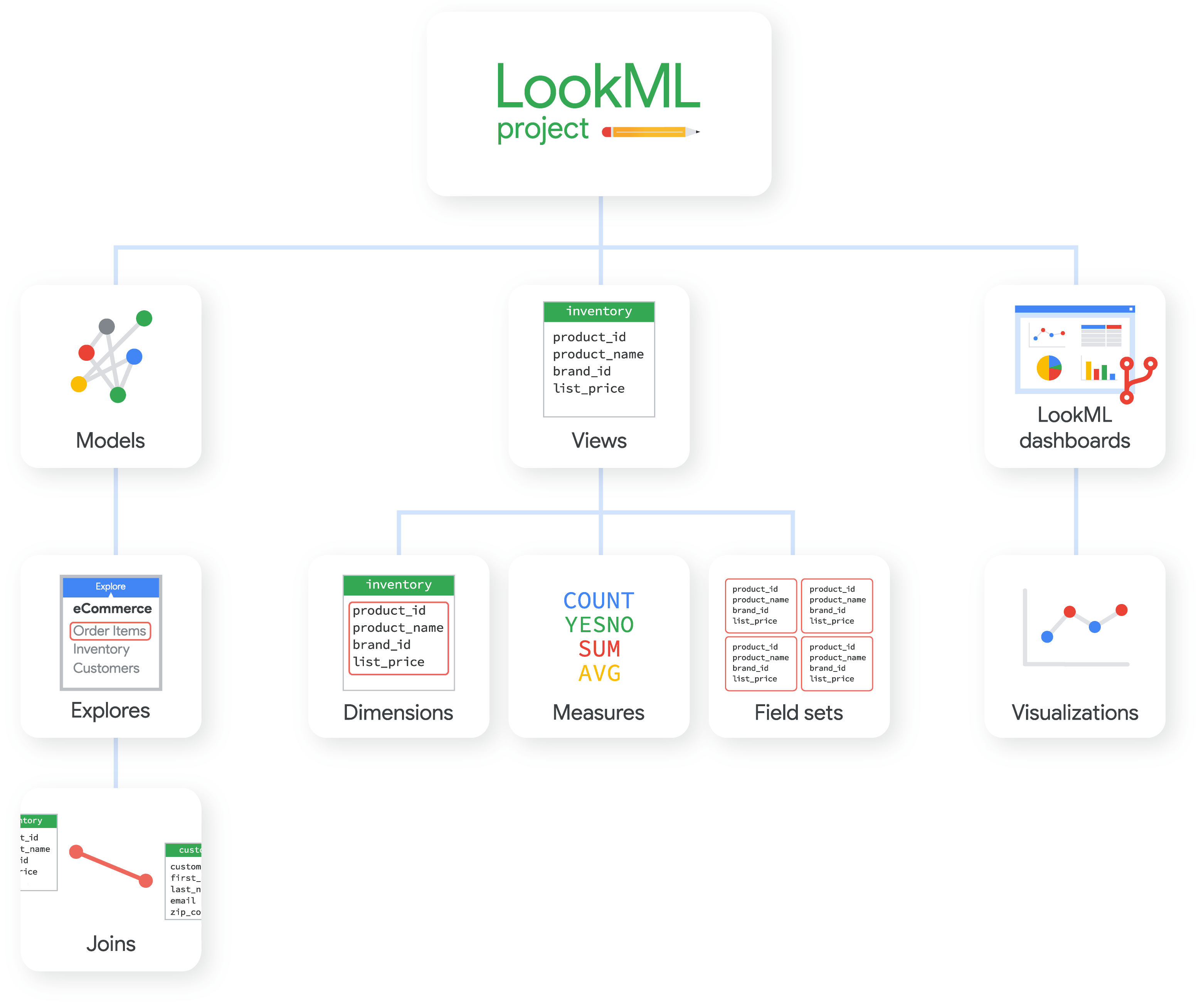

The Core LookML Building Blocks

To understand LookML's architecture, you need to understand its four primary structures. These are the concepts every LookML practitioner learns first, argues about longest, and builds their entire data modeling strategy around.

Views

A view is the foundational building block of LookML. Each view maps to a database table, a derived table, or a subquery result. Inside a view, you define how the data in that table should be named, calculated, and interpreted by the business.

Views are where you write your field-level business logic. You are not writing SQL that runs queries. You are writing metadata that describes how queries should be constructed when users interact with the Explore interface. The distinction is subtle but critical: LookML is a declarative language, not an imperative one. You describe intent; Looker handles execution.
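
To make the declarative point concrete, here is a minimal sketch of a view. The view name, the analytics.orders table, and the column names are assumptions chosen for illustration, not taken from any real project.

```lookml
# Hypothetical view file: orders.view.lkml
# Nothing here runs a query. It only describes how Looker should
# build SQL when a user selects these fields in an Explore.
view: orders {
  # Assumed physical table in the warehouse
  sql_table_name: analytics.orders ;;

  dimension: order_id {
    primary_key: yes
    type: number
    sql: ${TABLE}.order_id ;;
  }

  dimension: status {
    type: string
    sql: ${TABLE}.status ;;
  }
}
```

The ${TABLE} reference resolves to the view's table at query time, which is why the same definition keeps working if sql_table_name later points somewhere else.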

Dimensions

Dimensions are the attributes your users slice data by. Customer country. Product category. Order status. Every column in your database that represents a descriptive quality — something you group by, filter on, or use to segment a metric — becomes a dimension in LookML.

Dimensions can be raw fields that point directly to a column, derived fields computed from SQL expressions, or dimension groups. The dimension group is one of LookML's most practically useful features: it takes a timestamp column and automatically generates a full date hierarchy — year, quarter, month, week, day, hour — without requiring the analyst to write separate field definitions for each grain. One dimension group replaces what would be six to ten separate fields in a conventional SQL data model.
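
A short sketch of the three kinds of dimension described above, again using assumed table and column names. The timeframes list is one reasonable choice, not a required set.

```lookml
# Hypothetical view fragment showing the three dimension styles
view: orders {
  sql_table_name: analytics.orders ;;

  # Raw dimension: points straight at a column
  dimension: country {
    type: string
    sql: ${TABLE}.country ;;
  }

  # Derived dimension: computed from a SQL expression
  dimension: is_large_order {
    type: yesno
    sql: ${TABLE}.amount >= 500 ;;
  }

  # Dimension group: one definition, one field per listed timeframe
  # (created_date, created_week, created_month, and so on)
  dimension_group: created {
    type: time
    timeframes: [raw, hour, date, week, month, quarter, year]
    sql: ${TABLE}.created_at ;;
  }
}
```

Users see each timeframe as its own field in the Explore, while the analyst maintains a single definition.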

Measures

Measures are the quantitative values your business actually cares about. Total revenue. Average order value. Distinct customer count. In LookML, a measure is an aggregate function applied to one or more fields — and it is defined once in the view, reused everywhere it appears across the platform.

This is where LookML earns its reputation for data governance. When the finance team and the growth team both pull revenue from a Looker Explore, they are running the same measure definition. No drift. No divergent logic built independently inside two different dashboards. The governance is baked into the model layer, not enforced through analyst convention or documentation that nobody reads.
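
As an illustration of "defined once, reused everywhere", the three measures named above might look like the sketch below. Field names and the amount and customer_id columns are assumptions.

```lookml
# Hypothetical view fragment: each measure is defined once and
# reused by every Explore, Look, and dashboard that selects it.
view: orders {
  sql_table_name: analytics.orders ;;

  measure: total_revenue {
    type: sum
    sql: ${TABLE}.amount ;;
    value_format_name: usd
  }

  measure: average_order_value {
    type: average
    sql: ${TABLE}.amount ;;
    value_format_name: usd
  }

  measure: distinct_customers {
    type: count_distinct
    sql: ${TABLE}.customer_id ;;
  }
}
```

If finance later changes what counts as revenue, the change happens in the sql of total_revenue and nowhere else.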

It is worth noting one limitation: in LookML, an aggregate measure cannot aggregate another measure. A measure of type: number can combine existing measures, so a simple ratio such as total revenue divided by order count is straightforward. Metrics that need an aggregate of an aggregate, such as an average of per-customer totals, require a derived table or additional SQL expressions. This is one area where the dbt Semantic Layer's metric composition model is structurally cleaner.

Explores

An Explore is the interface that surfaces a group of joined views to your users. It is what business users interact with when they open Looker. An Explore defines which views are available together, how they join, and what fields are visible to the analyst.

Explores sit in model files, alongside the join logic that connects your views. When you define an Explore, you are telling Looker how to navigate your data relationships: which tables can be joined, on which keys, using which join types and relationships. Joins are declared explicitly rather than inferred, but they can be chained through intermediate views to any depth, and Looker's symmetric aggregates keep measures correct when those joins fan out. That combination is one of LookML's most significant practical advantages over newer semantic layer tools. MetricFlow does support multi-hop joins, but only to a limited depth (dbt's documentation describes a limit of two hops), so deeper join chains across many intermediate tables remain a gap in its current join model.
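
Here is a hedged sketch of an Explore whose second join is reached through an intermediate view. The connection name, include path, and the order_items, orders, and users views are all hypothetical, and each view is assumed to define the dimensions referenced in sql_on.

```lookml
# Hypothetical model file: ecommerce.model.lkml
connection: "warehouse"
include: "/views/*.view.lkml"

explore: order_items {
  # First hop: order_items -> orders
  join: orders {
    type: left_outer
    relationship: many_to_one
    sql_on: ${order_items.order_id} = ${orders.order_id} ;;
  }

  # Second hop: users is reached through orders,
  # not from order_items directly
  join: users {
    type: left_outer
    relationship: many_to_one
    sql_on: ${orders.user_id} = ${users.user_id} ;;
  }
}
```

The relationship parameter, together with a primary_key on each view, is what lets Looker decide when symmetric aggregates are needed, so it is worth getting right even in a small model.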

Derived Tables and PDTs: Where LookML Gets Complicated

Beyond the four core structures, derived tables are where LookML starts to reveal its depth and its debt.

A derived table is a view built from a SQL query rather than a physical database table. This lets you create complex transformations directly in LookML (sessionizing event streams, computing rolling averages, building intermediate aggregations) without touching your transformation layer. Persistent Derived Tables, known as PDTs, take this further by writing the result to a table in a scratch schema in your database and rebuilding it on a schedule or when a LookML datagroup triggers.
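
A minimal sketch of a PDT driven by a datagroup. The datagroup name, the analytics.etl_log table used in its trigger, and the aggregation itself are assumptions for illustration. In a real project the datagroup would sit in a model file and the view in its own view file.

```lookml
# Hypothetical datagroup (model file): rebuild when new data lands
datagroup: nightly_etl {
  sql_trigger: SELECT MAX(loaded_at) FROM analytics.etl_log ;;
  max_cache_age: "24 hours"
}

# Hypothetical PDT (view file): the SQL below is transformation
# logic living inside the BI layer
view: customer_order_facts {
  derived_table: {
    sql:
      SELECT
        customer_id,
        COUNT(*) AS lifetime_orders,
        SUM(amount) AS lifetime_revenue
      FROM analytics.orders
      GROUP BY customer_id ;;
    datagroup_trigger: nightly_etl
  }

  dimension: customer_id {
    primary_key: yes
    type: number
    sql: ${TABLE}.customer_id ;;
  }

  dimension: lifetime_orders {
    type: number
    sql: ${TABLE}.lifetime_orders ;;
  }
}
```

Removing datagroup_trigger would turn this back into an ordinary, non-persistent derived table that is computed at query time.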

PDTs are powerful. They are also the primary source of technical debt in every mature LookML implementation I have worked in. When analysts reach for PDTs to solve transformation problems, they are doing data engineering inside their BI tool. The transformation logic lives in LookML rather than in dbt, which means it is harder to test, harder to document lineage for, and invisible to any system that queries your warehouse directly.

If your LookML implementation is heavy with PDTs, you are already operating a split transformation architecture. That is the first signal that a migration conversation is worth having.

LookML's Genuine Strengths in 2026

After years of implementing LookML for enterprise clients, I have a clear view of what it is actually excellent at — and it is worth saying clearly, because the "everything should move to dbt" narrative sometimes obscures genuine advantages.

The developer experience is unmatched in the semantic layer space. The LookML IDE gives you auto-complete, contextual hints, and an immediate feedback loop. When you write a new measure, you can see it appear in the Explore within seconds. You are building and testing the semantic layer in the same environment, with no build-compile-deploy cycle between intent and validation.

LookML is also exceptionally mature. The language has been in production since 2013. It has handled edge cases in enterprise schemas that newer tools are only beginning to encounter. Complex fan-out logic, symmetric aggregates, multi-hop join resolution — these are solved problems in LookML. A 2024-2026 survey of over 500 data teams found LookML with 28% adoption across enterprise BI implementations. That is not market share you accumulate without solving real problems at scale.

And for enterprises that are already Looker shops, the switching cost is real. A mature LookML model with hundreds of views, thousands of dimension and measure definitions, and multiple years of analyst-built Explores is a significant organizational asset. The model encodes institutional knowledge about how the business defines its data. That does not migrate trivially, and anyone who tells you otherwise has not done it.

The dbt Semantic Layer: What It Is and What It Is Not

The dbt Semantic Layer, powered by MetricFlow, takes a different approach to the same problem. Instead of defining business logic inside a BI tool, you define it in YAML within your dbt project. Your metrics, entities, and semantic models live alongside your dbt transformations — in the same repository, the same version control system, the same code review process your data engineers already use.

The core primitives are different from LookML. Instead of views and dimensions, you work with semantic models (which map to dbt models in your warehouse), entities (business concepts like customer, order, or product, defined globally across models), measures (aggregations defined on the semantic model), and metrics (composed business calculations built on top of measures).

The concept of entities is one of MetricFlow's genuine innovations. An entity is a global concept — it defines what a "customer" or an "order" is across all of your semantic models. This makes multi-entity queries more explicit and theoretically cleaner than LookML's Explore-scoped join definitions. In practice, MetricFlow's entity model handles most standard join patterns well, but struggles with the multi-hop scenarios where LookML still has the advantage.

The dbt Semantic Layer reached general availability in October 2023. It is production-ready. For dbt-native teams that have already invested in transformation models and want to centralize all business logic in a single, open, SQL-based workflow, it is a compelling and increasingly mature option.

The Honest Comparison

Here is the practitioner view that vendor comparison posts usually avoid.

LookML is better for teams that live inside Looker. The developer experience, the join resolution, the self-service Explore interface, and more than a decade of production maturity are genuine advantages for organizations whose primary data consumer is a Looker user. The feedback loop between model change and business user impact is tighter than anything dbt's build-deploy workflow currently offers.

The dbt Semantic Layer is better for teams that want tool-agnostic metric definitions. Because MetricFlow sits outside any specific BI tool, your metric definitions work across Tableau, Power BI, Hex, and any other tool that implements the dbt Semantic Layer API. If your organization uses multiple BI tools — or expects to change its primary BI platform within three years — centralizing metrics in dbt removes a vendor lock-in risk that compounds over time.

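dbt's semantic layer also wins on openness. MetricFlow is open-source. LookML is proprietary. If Google Cloud changes Looker's pricing or positioning materially, your LookML model has no portable form: leaving Looker means translating the model into another tool's language. Your dbt semantic models are not tied to a single BI vendor in the same way.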

Neither wins cleanly on the sync problem. The most common pain point in mixed dbt-plus-Looker environments is keeping LookML and dbt models aligned. Business logic defined in dbt transformation models leaks into LookML PDTs. Metric definitions drift between layers. Governance breaks at the boundary between transformation and semantic modeling. Both approaches are solving this, and neither has fully eliminated the operational burden of maintaining consistency across the boundary.

The Migration Decision Framework

If you are sitting with a mature LookML implementation and evaluating whether to migrate, these are the questions that actually matter. Not which tool has more features. What fits your current infrastructure, team capabilities, and AI strategy.

Stay with LookML if: Your organization is deeply invested in the Looker platform and your analysts depend on Explores for self-service. Your LookML model is well-maintained, with certified dimensions and measures, consistent governance, and limited PDT debt. You are building on Looker's agentic capabilities — the MCP integration and Open SQL interface that Google is actively investing in. Google just confirmed Looker as their enterprise semantic layer for the agentic AI era. That roadmap alignment has real value.

Migrate to the dbt Semantic Layer if: Your team is already dbt-native and wants a single location for all business logic, from raw transformation to metric definition. You use multiple BI tools, or plan to. You have significant LookML PDT debt that is better managed as dbt models with proper testing and lineage. You want to expose metrics to AI agents via the dbt Semantic Layer API, which is already integrating with an expanding set of tools through MetricFlow's open protocol.

Do both (as a transition pattern) if: You are mid-migration, or you have complex LookML Explores with multi-hop join logic that MetricFlow cannot yet replicate. The practical pattern here: shift all transformation logic left into dbt models, keep LookML thin by pointing views at dbt models rather than raw tables, and migrate metric definitions incrementally. dbt Labs offers a dbt-metrics-converter CLI tool that handles some LookML-to-MetricFlow translation, though significant manual work remains — particularly around derived metrics, PDT logic, and Explore-specific join definitions.
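
For the transition pattern, a "thin" view might look like the sketch below. It assumes a dbt model has already been built as analytics.customer_order_facts. That table name and its columns are hypothetical.

```lookml
# Hypothetical thin view: no derived_table block and no
# transformation SQL. The aggregation now lives in a dbt model,
# where it can be tested and documented.
view: customer_order_facts {
  # Assumed to be the table produced by the dbt model
  sql_table_name: analytics.customer_order_facts ;;

  dimension: customer_id {
    primary_key: yes
    type: number
    sql: ${TABLE}.customer_id ;;
  }

  dimension: lifetime_orders {
    type: number
    sql: ${TABLE}.lifetime_orders ;;
  }

  # Simple pass-through aggregation over a column dbt already prepared
  measure: total_lifetime_revenue {
    type: sum
    sql: ${TABLE}.lifetime_revenue ;;
    value_format_name: usd
  }
}
```

Once every view looks like this, what remains in LookML is naming, joins, and measures, which is the part that maps most directly onto MetricFlow definitions.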

What the Migration Actually Involves

The migration from LookML to the dbt Semantic Layer is not a refactoring exercise. It is an architectural shift. The work breaks into three distinct stages, and skipping any one of them creates the same problems you were trying to solve in the first place.

First, audit your LookML model. Catalog every measure, every PDT, every derived view. Separate what belongs in the transformation layer — business logic that should live in dbt models — from what belongs in the semantic layer as MetricFlow definitions. PDTs that contain transformation logic should become dbt models. Raw table views with clean dimensions and measures are candidates for MetricFlow semantic models.

Second, shift transformation logic left. Any business logic currently living in LookML PDTs needs to move into dbt. Write the SQL transformations in dbt, build data tests, document lineage. Until your dbt models support the required metric calculations, the semantic layer migration cannot proceed — you cannot build a governed semantic model on top of undefined transformation logic.

Third, build the MetricFlow semantic models. Define entities, semantic models, measures, and metrics in YAML. Connect your BI tools via the dbt Semantic Layer API or JDBC interface. Validate metric parity against your existing LookML definitions before decommissioning anything. Based on industry estimates, a full migration for a mid-sized Looker implementation runs approximately 200 hours of engineering work. That is not a reason to avoid the migration if the reasons are right. It is a reason to be deliberate about when those reasons actually exist.

LookML, dbt, and the Agentic AI Layer

In 2026, the migration decision has one variable that did not exist two years ago: agentic AI.

AI agents need governed semantic layers to function reliably on your business data. When an agent queries your data, it asks questions about metrics, dimensions, and relationships. If those definitions are consistent and machine-readable, the agent operates within your data governance framework. If they are not, it hallucinates business logic and produces answers that look correct and are wrong.

LookML's agentic path is Looker's MCP integration, which allows AI agents to query Looker's semantic model through the Open SQL interface. Google has framed this explicitly: Looker is the enterprise platform for "trusted, governed data powered by a central semantic model" in agentic use cases. The semantic layer is Layer 2 of the Intelligence Allocation Stack — the governed vocabulary that sits between your data foundation and the AI systems operating above it.

The dbt Semantic Layer's agentic path is the MetricFlow API, which exposes metric definitions to any tool that speaks the protocol. As MCP adoption grows across the AI tooling ecosystem, a MetricFlow model connected via the Semantic Layer API becomes a vendor-agnostic governed interface that any agent can query — not just agents embedded in Google's ecosystem.

Both paths lead to the same destination: a semantic layer that makes your data trustworthy for AI. The question is which path fits your existing data infrastructure and the AI tools your organization is actually deploying.

The Bottom Line

LookML is not going away. Google just doubled down on it. For organizations that are Looker-native, have invested in building a proper semantic model with governed dimensions and certified measures, and are looking to extend that investment into the agentic AI era, there is a clear and well-supported path forward.

For organizations that are dbt-native, or that are carrying significant technical debt in the form of PDT-heavy LookML implementations and unmaintained derived views, the dbt Semantic Layer migration is worth the investment — not because LookML is wrong, but because centralizing all business logic in a single, open, SQL-based workflow removes operational complexity that compounds as AI projects scale.

The semantic layer question is never which tool is best in isolation. It is where your business logic should live, who owns it, and what systems need to read from it — today and two years from now. Get that question right, and either path can support the data architecture an enterprise AI strategy actually requires.

For every dollar companies spend on AI, six should go to the data architecture underneath it. The semantic layer is where most organizations have the most to gain, and the most to lose by getting it wrong.


Unwind Data
