Omni's $120M Series C Puts the Semantic Layer at the Center of AI Analytics

Omni raised $120M at a $1.5B valuation today, with the semantic layer as the explicit center of its pitch and its moat. Here is why the framing matters as much as the number.

The Omni Series C is official: $120 million at a $1.5 billion valuation, led by ICONIQ with Theory Ventures, First Round Capital, Redpoint Ventures, and GV participating. ARR is up 4x over the last year.

The number is significant. What is more significant is how Colin Zima, Omni's CEO, describes what the company built.

Not a BI tool. Not an AI analytics platform. The specific phrase he uses is "the context layer." And at the center of it — architecturally, strategically, as the explicit source of the company's moat — is the semantic layer.

This is not a coincidence. It is a thesis being validated at $1.5 billion.

What Omni Actually Said

The Series C post is worth reading carefully, because the language is precise in a way that fundraising announcements usually are not. Zima does not lead with AI. He leads with trust, and then explains why trust requires a specific architecture.

The argument goes like this: natural language is the best interface for data that has ever existed. But natural language only works at scale with what he calls "calibrated context." Every company has its own definition of revenue, customer, and last quarter. That logic is tribal knowledge: it lives in people's heads and in scattered documentation. Without it, an LLM will confidently guess. And guessing does not work in analytics.

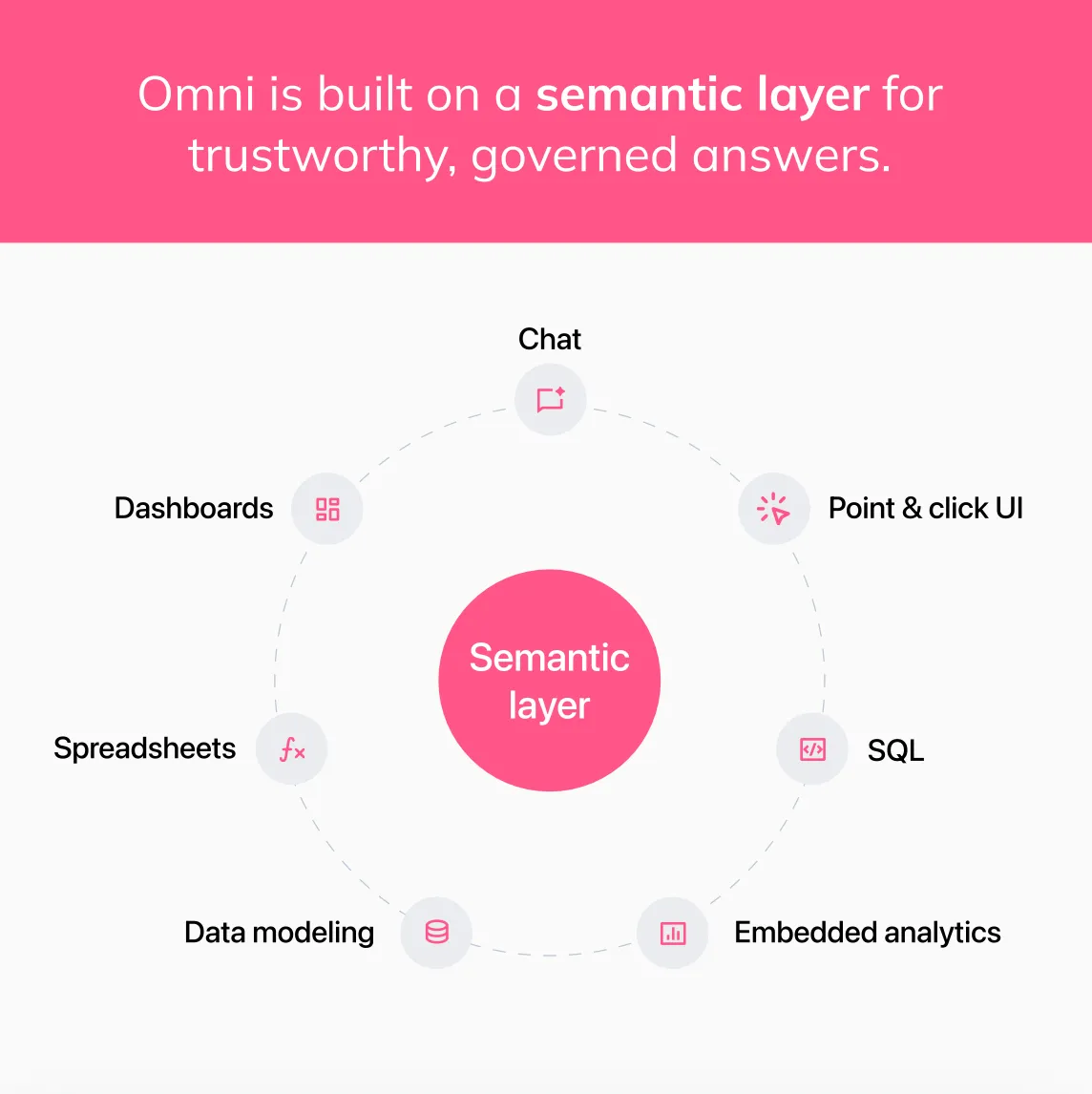

Then comes the line that matters: "We built Omni around a semantic layer because we believed this had to be architectural. One place to define metrics and encode business logic. One system to enforce permissions across every query."

And the moat statement: "The moat in AI analytics is the structured context underneath. A shared, reusable understanding of how a business operates."

That is not marketing language. That is the Intelligence Allocation Stack, the four-layer model outlined later in this post, described by a company that has been building it from layer two upward for four years.
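To make "one place to define metrics, one system to enforce permissions" concrete, here is a minimal Python sketch. It is not Omni's implementation or API. The `Metric` class, the `METRICS` and `ROW_POLICIES` tables, the role names, and the `orders` schema are all hypothetical, chosen only to show the shape of the idea: every query for a metric is compiled from the same definition, and the caller's row-level policy is applied on every path.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    sql: str             # aggregate expression, defined once
    table: str
    filters: tuple = ()  # business rules that are part of the definition


# Hypothetical definitions: the single place where "revenue" is defined.
METRICS = {
    "revenue": Metric(
        name="revenue",
        sql="SUM(amount)",
        table="orders",
        filters=("status = 'completed'", "is_test = FALSE"),
    ),
}

# Hypothetical row-level policies, keyed by role. None means no restriction.
ROW_POLICIES = {
    "emea_analyst": "region = 'EMEA'",
    "admin": None,
}


def compile_query(metric_name: str, role: str) -> str:
    """Build SQL for a metric, always applying its filters and the role's policy."""
    if metric_name not in METRICS:
        raise KeyError(f"unknown metric: {metric_name}")
    if role not in ROW_POLICIES:
        raise PermissionError(f"unknown role: {role}")
    metric = METRICS[metric_name]
    conditions = list(metric.filters)
    policy = ROW_POLICIES[role]
    if policy:
        conditions.append(policy)
    where = f" WHERE {' AND '.join(conditions)}" if conditions else ""
    return f"SELECT {metric.sql} AS {metric.name} FROM {metric.table}{where}"


print(compile_query("revenue", "emea_analyst"))
```

Whether the request comes from a dashboard, a notebook, or an LLM, it goes through `compile_query`, so the definition of revenue and the permission check cannot drift apart between consumers.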

Why This Is the Right Framing

I have written about the semantic layer as infrastructure since before most BI vendors were willing to call it that. The argument has always been the same: the semantic layer is not a feature of your BI tool. It is the layer of your data stack that determines whether everything above it — dashboards, self-service analytics, AI agents — returns trustworthy results or confident guesses.

What Omni is saying with this fundraise is that they built their entire product around that bet, and the market is confirming it was the right one.

The timing is striking. Yesterday, Google announced the agentic BI era with Looker, centered on LookML as the semantic grounding layer for AI agents and a native MCP server that exposes that semantic layer to any AI tool that speaks the protocol. Today, the Omni Series C closes with a CEO post that explicitly identifies the semantic layer as the company's architectural moat and the reason their AI works where others fail. Two major announcements in 48 hours, both making the same underlying argument.

The semantic layer is not a category anymore. It is the foundation of AI analytics. The market is saying so with capital, with product strategy, and with enterprise adoption.

The Part That Resonates Most

The section of Zima's post that I keep returning to is this: "Context compounds. Over time, the system gets smarter because it encodes more institutional knowledge."

This is the part that most AI analytics discussions miss entirely. The conversation is almost always about the model — which LLM, which embeddings, which RAG architecture. The model is the commodity. What is not a commodity is the accumulated business context your organization has built, maintained, and encoded into a governed semantic layer over months and years.

Omni's customers are feeding the system dbt definitions and documentation, Notion and Google Docs, and meeting note transcripts. The result is AI that selects the right fields, applies correct logic, and speaks the company's language. That behavior is not a function of the model. It is a function of the semantic layer that grounds the model in your specific business reality.

This is exactly what the Intelligence Allocation Stack predicts. Layer 1 is the data foundation. Layer 2 is the semantic layer. Layer 3 is orchestration. Layer 4 is AI. Organizations that build layer 2 before layer 4 get AI that compounds in value over time. Organizations that skip straight to layer 4 get AI that is impressive in demos and unreliable in production.

Omni bet the company on building layer 2 first. A $1.5 billion valuation is the market's answer on whether that was the right sequence.

The MCP Connection

One line in the Series C post that deserves more attention than it is getting: Omni's AI answers do not stop at the edges of their app. Customers embed them into products and integrate them into tools like Claude, ChatGPT, and Cursor — via APIs and via Omni's MCP server.

We covered the Omni MCP server when it launched earlier this year. The argument then was that MCP is plumbing and the semantic layer is the brain. Omni's MCP server matters precisely because it exposes governed semantic definitions to any AI agent that speaks the protocol. The business logic does not have to be rebuilt for every new AI tool. It travels with the semantic layer, available to any consumer that connects to it.

That is the moat Zima is describing in technical terms. Not the interface. Not the model. The structured context layer that every interface and every model can query, with the same definitions enforced every time.
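The "same definitions enforced every time" point can be sketched in a few lines of Python. This is an illustration only: the request shape, the `handle_request` function, and the client names are invented for the example and are not the MCP wire format or Omni's server. The assumption it demonstrates is simply that every consumer is answered from one governed store rather than carrying its own copy of the logic.

```python
import json

# Hypothetical governed definitions held by the semantic layer.
DEFINITIONS = {
    "revenue": {
        "sql": "SUM(amount)",
        "description": "Completed, non-test orders only",
    },
}


def handle_request(raw: str) -> str:
    """Answer a JSON request for a metric definition.

    The request shape ({"client": ..., "metric": ...}) is made up for this
    sketch. The client field is deliberately ignored when resolving the
    definition, so no consumer can get a different answer.
    """
    request = json.loads(raw)
    metric = request.get("metric")
    if metric not in DEFINITIONS:
        return json.dumps({"error": f"unknown metric: {metric}"})
    return json.dumps({"metric": metric, **DEFINITIONS[metric]})


# Three different consumers ask the same question.
clients = ["chat_assistant", "code_editor", "embedded_app"]
answers = {
    c: handle_request(json.dumps({"client": c, "metric": "revenue"}))
    for c in clients
}

# Every client received an identical definition.
print(len(set(answers.values())) == 1)
```

Adding a fourth AI tool means adding a fourth client, not a fourth definition of revenue.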

What This Means for the Market

The Omni Series C, one day after Google's agentic BI announcement with Looker, is a useful moment to step back and look at what the enterprise analytics market is converging on.

Three years ago, the market bet was on visualization. Which tool had the best charts. Which dashboard builder was the most intuitive. Which platform had the most connectors. Those were the differentiators that drove purchasing decisions.

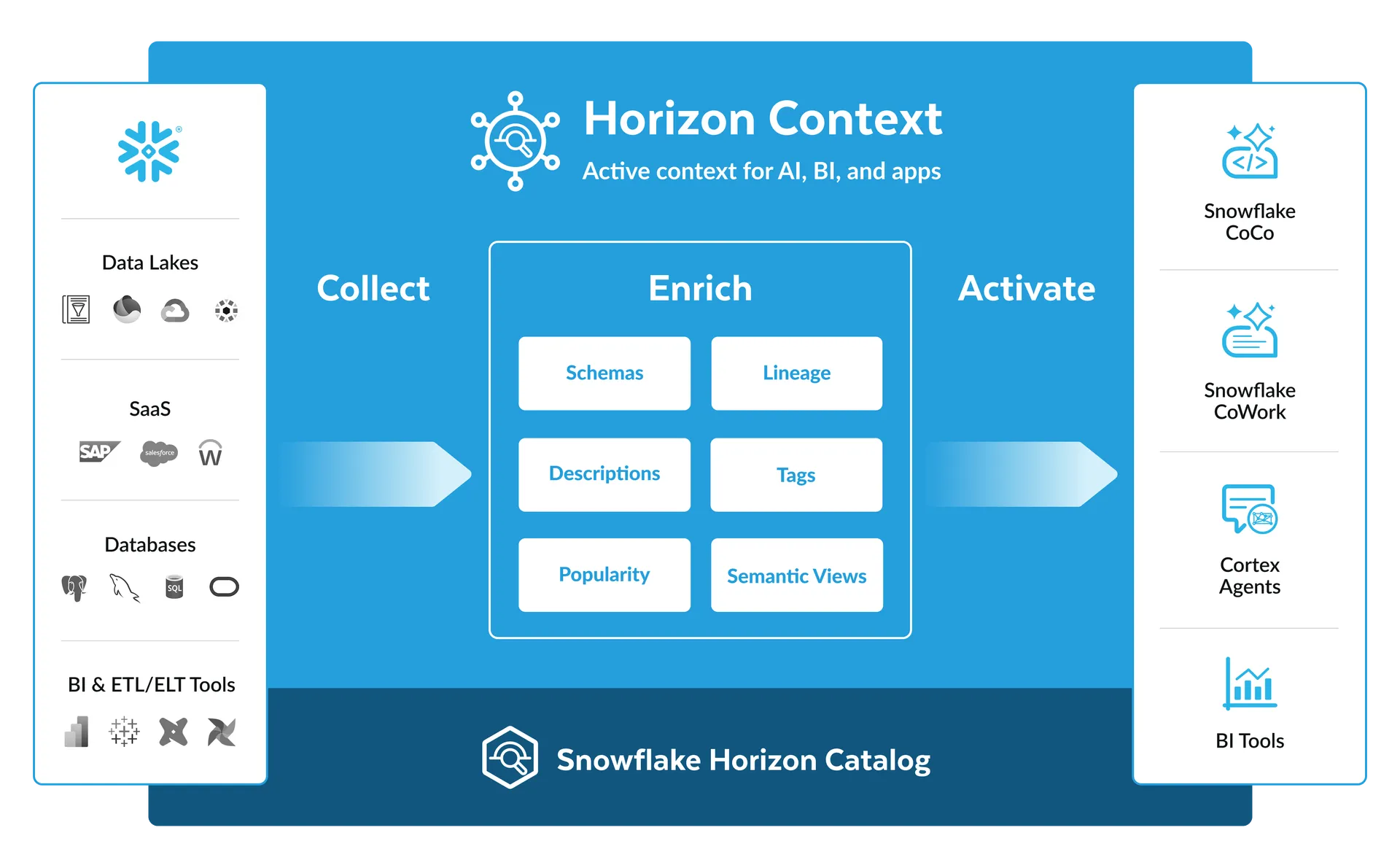

That era is over. The purchasing decision now is not about the interface. It is about the semantic layer underneath the interface. Omni has it natively. Looker has LookML. Snowflake has Semantic Views. Databricks has Unity Catalog Metric Views. Every major platform is racing to establish the governed definition layer as their moat, because the market — and every serious AI implementation — has arrived at the same architectural conclusion: the semantic layer is what makes AI analytics reliable.

The organizations that built this layer two or three years ago — when it was still considered optional infrastructure and not a competitive requirement — are the ones whose AI deployments are working in production right now. The organizations that are building it today, whether through Omni or dbt or Snowflake or any other path, are making the same bet, just later. The organizations that are still skipping it in favor of faster AI demos will have a harder conversation with their leadership teams in 12 months.

For every dollar you spend on AI, six should go to the data architecture underneath it. Omni just raised $120 million from some of the most rigorous enterprise investors in the market to build exactly that. The thesis is the same. The validation is now financial.

An Honest Note on Omni

I have compared Omni with Looker, covered Omni's MCP server, and assessed its positioning in the semantic layer BI tools comparison. The consistent observation across all of it is that Omni is the most architecturally coherent of the modern BI tools for organizations that want the governance depth of LookML without the Google Cloud alignment requirement or the maintenance burden that comes with a large, aging LookML codebase.

The Series C validates the market demand. The question for any organization evaluating Omni is the same one that applies to every semantic layer decision: is your data foundation clean enough to build on? A governed semantic layer on top of inconsistent, ungoverned source data produces consistent, governed transformations of inconsistent data. The tool does not fix the foundation. It amplifies whatever is underneath it.
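A small Python sketch shows what "amplifies whatever is underneath it" looks like in practice. The rows, the `revenue` function, and the `dedupe` helper are hypothetical. The assumption is a source table in which one order was loaded twice upstream: the governed definition is applied correctly in both cases, and only the foundation differs.

```python
def revenue(rows):
    """Governed definition: sum of amount for completed orders."""
    return sum(r["amount"] for r in rows if r["status"] == "completed")


def dedupe(rows):
    """Keep the first row seen for each order_id (stand-in for upstream cleanup)."""
    seen = {}
    for r in rows:
        seen.setdefault(r["order_id"], r)
    return list(seen.values())


# Hypothetical source rows: order 2 was loaded twice upstream.
source_rows = [
    {"order_id": 1, "amount": 100.0, "status": "completed"},
    {"order_id": 2, "amount": 50.0, "status": "completed"},
    {"order_id": 2, "amount": 50.0, "status": "completed"},
    {"order_id": 3, "amount": 70.0, "status": "refunded"},
]

reported = revenue(source_rows)         # same definition, dirty foundation
trusted = revenue(dedupe(source_rows))  # same definition, clean foundation

print(reported, trusted)  # 200.0 150.0
```

Every consumer of the semantic layer would report 200.0, consistently and with full governance, and every one of them would be wrong by the same amount.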

If the foundation is sound, Omni is a compelling bet. The semantic layer at the center of the product, compounding context over time, accessible via MCP to any AI agent in your stack — that architecture is exactly what the AI analytics era requires. The $1.5 billion valuation says the enterprise market agrees.
