90% of Companies Are Weakening AI Agent Governance to Ship Faster. Here's Why That Backfires.

90% of organizations pressure security teams to loosen AI controls. Meanwhile, companies with AI governance ship 12x more projects to production. The fix isn't speed. It's data architecture.

90% of Organizations Are Telling Their Security Teams to Get Out of the Way

That number comes from a March 2026 analysis that should alarm every data leader in the room. Nine out of ten organizations are actively pressuring their security teams to loosen identity controls so AI agents can ship faster.

Read that again. Companies aren't failing at AI agent data governance because they don't know it matters. They're deliberately weakening it because it slows things down.

Meanwhile, 63% of organizations cannot enforce purpose limitations on their AI agents. And Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls.

The pattern is familiar. Companies are allocating intelligence to the wrong layer. Again.

The Agentic AI Boom Is Real. The Data Governance Isn't.

Let's be clear about what's happening. 2026 is the year AI agents went from demos to production. 72% of large enterprises are now operating agent systems beyond pilot programs. Gartner projects that 40% of enterprise applications will embed AI agents by the end of this year.

The technology works. The infrastructure underneath it doesn't.

Databricks' 2026 State of AI Agents report found that companies using AI governance tools get 12 times more AI projects into production. Not 12% more. Twelve times. The organizations that invested in AI agent data governance, data quality, and governed data architectures aren't just more compliant. They're shipping more AI, faster, with better results.

But most companies didn't build that foundation. So when the pressure to deploy AI agents intensified, they took the shortcut: tell security to lower the bar.

Why Loosening Controls Is the Worst Possible Strategy

AI agents aren't chatbots. They don't just answer questions. They take actions. They access systems, modify data, trigger workflows, make decisions. An AI agent with loosened identity controls isn't just a risk to data governance. It's a risk to the entire business.

When 63% of organizations can't enforce purpose limitations, it means their AI agents can access data they weren't designed to use, trigger processes outside their scope, and make decisions based on information they shouldn't have. That's not innovation. That's a liability.

And the consequences are already showing up. The Gartner prediction that 40% of agentic AI projects will be canceled isn't about technology failure. It's about governance failure. Escalating costs happen when ungoverned agents consume resources without oversight. Unclear business value happens when agents operate on inconsistent data. Inadequate risk controls happen when security teams are told to get out of the way.

The companies canceling AI agent projects in 2027 are the same companies loosening security controls in 2026. The cause and effect is direct.

I've Watched This Cycle Three Times Now

In 2018, every company hired data scientists. The models never shipped because 80% of their time went to cleaning data. The data infrastructure wasn't there, but companies hired the talent anyway and hoped it would work itself out.

In 2022, companies bought every BI tool on the market. Dashboards showed different numbers depending on who built them. There was no single source of truth, no semantic layer, no governed definitions of what "revenue" or "active user" meant. But companies deployed the tools anyway and hoped it would work itself out.

In 2024, companies deployed generative AI. NVIDIA's State of AI report found 88% of companies using AI, but only 39% seeing measurable impact. The models were fine. The data quality underneath was a mess. But companies deployed anyway and hoped it would work itself out.

Now in 2026, companies are deploying AI agents. And instead of building the AI agent data governance that agents require to function safely, they're pressuring security teams to loosen controls. Same pattern. Same shortcut. Same outcome, but with higher stakes.

The technology changes. The mistake stays the same: skip the data foundation, deploy the shiny thing, deal with the consequences later.

What AI Agents Actually Need to Work

I use a framework called the Intelligence Allocation Stack to diagnose where companies go wrong. It has four layers:

Layer 1: Data Foundation. Data governance, data quality, ingestion pipelines, warehousing, single source of truth.

Layer 2: Semantic Layer. Business logic translated for machines. Metric definitions. Governed vocabulary. This is what turns raw data into something AI agents can reason with.

Layer 3: Orchestration Layer. Data pipelines, CRM syncs, reverse ETL, workflow automation, API integrations, real-time event processing.

Layer 4: AI Layer. AI agents, conversational AI, autonomous systems, predictive models.

AI agents live at Layer 4. But they depend entirely on Layers 1 through 3. An AI agent that can't trust the data it accesses will hallucinate, make wrong decisions, or take actions based on stale information. An AI agent without a semantic layer doesn't know what "revenue" means in your organization. An AI agent deployed without data governance has no defined limits on what data it may access or what it is supposed to do with it.
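As a rough illustration of that dependency, here is a minimal sketch of a readiness check that refuses to deploy a Layer 4 agent until the three layers beneath it are in place. The `Layer` enum mirrors the stack described above; the function names and the idea of a per-layer boolean "ready" flag are assumptions made for the example, not part of the framework itself.

```python
from __future__ import annotations

from enum import IntEnum


class Layer(IntEnum):
    DATA_FOUNDATION = 1
    SEMANTIC = 2
    ORCHESTRATION = 3
    AI = 4


def first_blocking_layer(ready: dict[Layer, bool]) -> Layer | None:
    """Return the lowest layer below the AI layer that is not ready, or None."""
    for layer in sorted(Layer):
        if layer is Layer.AI:
            break
        # A layer that was never assessed counts as not ready.
        if not ready.get(layer, False):
            return layer
    return None


def can_deploy_agent(ready: dict[Layer, bool]) -> bool:
    """An agent may ship only when Layers 1 through 3 are all ready."""
    return first_blocking_layer(ready) is None
```

In practice "ready" would be the result of an assessment rather than a hand-set flag, but the ordering is the point: the check reports the lowest missing layer first, because that is where the work has to start.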

When you pressure security teams to loosen controls, you're not removing friction from Layer 4. You're removing the guardrails that Layers 1 and 2 should have provided. The friction isn't the problem. The missing data foundation is.

For a deeper look at how this framework applies across industries, see our breakdown of the Intelligence Allocation Stack in practice.

The 12x Advantage Nobody Talks About

Here's what makes this frustrating. The data is crystal clear on what works.

Companies with AI governance tools ship 12 times more AI projects to production. IDC research shows companies with mature data governance see 24% higher revenue from AI initiatives. Only 15% of organizations have mature data governance, according to DATAVERSITY, which means the other 85% are leaving that advantage on the table.

The organizations that built governed data architectures before deploying AI agents aren't slowing down. They're going faster. They're deploying more agents, with better results, because their agents operate on trusted data with clear boundaries.

For every dollar companies spend on AI, they should be spending six on the data architecture underneath it. The companies pressuring security to lower the bar are spending the dollar without the six. And they're wondering why 40% of the projects get canceled.

Key Stats: Governance vs. No Governance

- 12x more AI projects reach production at companies using AI governance tools (Databricks)

- 24% higher revenue from AI initiatives at organizations with mature data governance (IDC)

- More than 40% of agentic AI projects are predicted to be canceled by the end of 2027 (Gartner)

- 15% of organizations currently have mature data governance, leaving the advantage to the few (DATAVERSITY)

What the 90% Should Do Instead

If you're in one of the organizations pressuring security to loosen AI controls, the instinct is understandable. The market pressure to deploy AI agents is real. Your competitors are shipping. Boards want results.

But the shortcut doesn't work. It never has. Here's what does.

Build purpose-specific data access layers. Instead of loosening general identity controls, create governed data endpoints that give AI agents exactly the data they need and nothing more. This is faster to deploy than it sounds, and it eliminates the need to compromise security.
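To make the idea of a purpose-specific access layer concrete, here is a minimal deny-by-default sketch. The agent name, purpose, dataset, and column names are all hypothetical, and a real deployment would enforce this in the data platform or an API gateway rather than in application code.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AccessGrant:
    """One governed endpoint: a purpose, a dataset, and the columns exposed."""
    purpose: str
    dataset: str
    columns: frozenset[str]


# Hypothetical policy: agent, purpose, and dataset names are made up for illustration.
POLICY: dict[str, list[AccessGrant]] = {
    "invoice-reminder-agent": [
        AccessGrant(
            "send_payment_reminder",
            "invoices",
            frozenset({"invoice_id", "due_date", "amount_due"}),
        ),
    ],
}


def allowed_columns(agent: str, purpose: str, dataset: str, requested: set[str]) -> set[str]:
    """Deny by default: succeed only if one grant covers every requested column."""
    for grant in POLICY.get(agent, []):
        if grant.purpose == purpose and grant.dataset == dataset:
            denied = requested - grant.columns
            if denied:
                raise PermissionError(f"{agent} may not read {sorted(denied)} from {dataset}")
            return set(requested)
    raise PermissionError(f"{agent} has no grant for purpose {purpose!r} on {dataset}")
```

The design choice worth noting is that the purpose is part of the lookup key. The same agent asking for the same table under a different purpose is refused, which is what enforcing a purpose limitation means in practice.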

Invest in a semantic layer. AI agents need to understand your business logic, not just your raw data. Governed metric definitions, clear vocabulary, and consistent business rules are what make the difference between an agent that helps and an agent that hallucinates. Tools like dbt Semantic Layer, Looker's LookML, or Omni make this achievable without rebuilding everything.
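A governed metric definition can be pictured as a small registry that agents must go through instead of writing their own SQL. The sketch below is plain Python and does not reflect the API of dbt, LookML, or Omni; the metric descriptions, SQL expressions, and owners are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    description: str
    sql: str    # the single governed expression for this metric
    owner: str  # team accountable for the definition


# Hypothetical definitions; column names and business rules are assumptions.
METRICS = {
    m.name: m
    for m in [
        Metric("revenue", "Recognized revenue net of refunds",
               "SUM(amount) - SUM(refund_amount)", "finance"),
        Metric("active_user", "Users with at least one session in the last 30 days",
               "COUNT(DISTINCT user_id)", "product"),
    ]
}


def resolve_metric(term: str) -> Metric:
    """Return the governed definition, or fail loudly instead of letting an agent guess."""
    key = term.strip().lower().replace(" ", "_")
    if key not in METRICS:
        raise KeyError(f"No governed definition for {term!r}")
    return METRICS[key]
```

An agent that calls `resolve_metric("Revenue")` gets the same expression every dashboard uses. An agent that asks for a term nobody has defined gets an error, which is a better failure than an invented calculation.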

Implement data quality monitoring before agent deployment. 62% of organizations report incomplete data. 58% cite capture inconsistencies. If your data has these problems, your AI agents will inherit them. Automated data quality checks should be a deployment prerequisite, not a nice-to-have.
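As a sketch of what a deployment prerequisite could look like, the gate below checks the two problems named above: incomplete fields and inconsistently captured values. The 95% completeness threshold, the function names, and the field names in the usage note are assumptions, and a production setup would more likely rely on a dedicated data quality tool.

```python
def completeness(rows: list[dict], field: str) -> float:
    """Share of rows where the field is present and non-empty."""
    if not rows:
        return 0.0
    filled = sum(1 for r in rows if r.get(field) not in (None, ""))
    return filled / len(rows)


def inconsistent_values(rows: list[dict], field: str, allowed: set) -> set:
    """Captured values for the field that fall outside the agreed vocabulary."""
    return {
        r[field]
        for r in rows
        if r.get(field) not in (None, "") and r[field] not in allowed
    }


def quality_gate(rows, required_fields, vocab, min_completeness=0.95) -> list[str]:
    """Return a list of failures; an empty list means the dataset passes."""
    failures = []
    for field in required_fields:
        score = completeness(rows, field)
        if score < min_completeness:
            failures.append(f"{field}: completeness {score:.0%} below {min_completeness:.0%}")
    for field, allowed in vocab.items():
        bad = inconsistent_values(rows, field, allowed)
        if bad:
            failures.append(f"{field}: unexpected values {sorted(bad)}")
    return failures
```

A deployment pipeline would call something like `quality_gate(rows, ["email", "country"], {"country": {"NL", "DE"}})` and block the agent release whenever the returned list is not empty.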

Document data lineage for every agent. If you can't trace what data your AI agent accessed and why, you can't audit it, you can't debug it, and you can't prove compliance. This becomes especially critical as the EU AI Act's Article 10 data governance requirements take effect in August 2026.
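A minimal lineage record needs to answer four questions: which agent, for what purpose, which data, and when. The sketch below keeps an append-only log in memory; the class names and record fields are assumptions, and a real system would write to durable, tamper-evident storage.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class LineageRecord:
    agent: str
    purpose: str
    dataset: str
    columns: tuple
    accessed_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class LineageLog:
    """Append-only, in-memory log; illustrative only."""

    def __init__(self) -> None:
        self._records = []

    def record(self, agent, purpose, dataset, columns) -> LineageRecord:
        # Sort the columns so identical accesses produce identical records.
        entry = LineageRecord(agent, purpose, dataset, tuple(sorted(columns)))
        self._records.append(entry)
        return entry

    def for_agent(self, agent: str) -> list:
        """Everything a given agent touched, in the order it happened."""
        return [r for r in self._records if r.agent == agent]

    def export(self) -> str:
        """JSON lines, one access per line, for auditors or debugging."""
        return "\n".join(json.dumps(asdict(r)) for r in self._records)
```

Recording the purpose alongside the access is what makes the log useful for audits: it lets a reviewer compare what the agent did with what it was supposed to be doing.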

Treat governance as a deployment accelerator, not a blocker. The 12x production advantage from AI governance tools isn't abstract. It means governed organizations are deploying AI agents faster and more successfully than ungoverned ones. Governance isn't what's slowing you down. The absence of AI agent data governance is what's going to cancel 40% of your projects.

The Regulatory Hammer Is Coming Too

If the business case for governance isn't convincing enough, the regulatory case will be. The EU AI Act's high-risk provisions take effect on August 2, 2026. Article 10 requires that AI systems operating in high-risk domains have governed, documented, representative, and bias-tested data. Fines under the Act reach up to 35 million euros or 7% of global annual turnover for prohibited practices, and up to 15 million euros or 3% for breaches of most other obligations, including those on high-risk systems.

Organizations that loosened security controls to ship AI agents faster are about to face regulators who want to see documentation, data lineage, and governance practices for those same systems. The shortcut that saved a quarter in 2026 could cost tens of millions in 2027.

Finland has had full enforcement powers in place since January 1, 2026. Other EU member states are following. The window for fixing data governance before enforcement scales across Europe is measured in months, not years. You can review the current EU AI Act regulatory framework to understand what documentation requirements apply to your agent deployments.

The Real Race

The race to deploy AI agents is real. But it's not won by the company that ships the most agents the fastest. It's won by the company whose agents actually work.

And the agents that work are the ones built on governed data, clear business logic, documented lineage, and purpose-specific access controls. Not on loosened security and crossed fingers.

90% of organizations are telling their security teams to step aside. By the end of 2027, Gartner expects more than 40% of agentic AI projects to be canceled. The correlation isn't coincidental.

Systems beat individuals at scale. And AI agent data governance beats speed every time.

Start at Layer 1. The agents can wait.

More data strategy insights like this

Join data and operations leaders getting Unwind's biweekly roundup on turning data into decisions.

More from Unwind Data

BI Migration Approach: What Actually Works and What Breaks

Most BI migrations fail because teams migrate dashboards instead of fixing the logic underneath them. Here is a honest account of what works, what breaks, and why the semantic layer is where every migration should start.

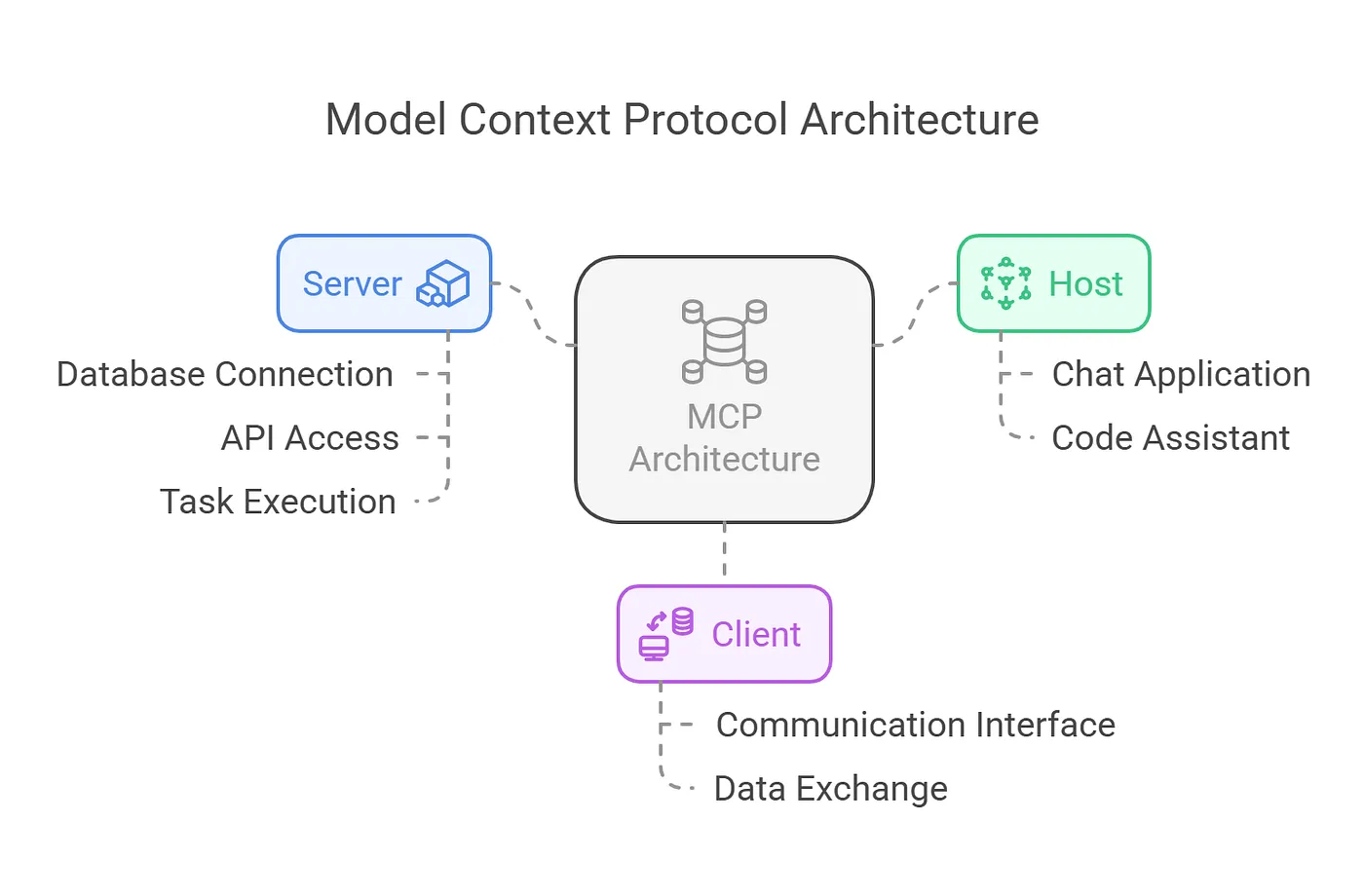

Why MCP Without a Semantic Layer Will Fail

Gartner warns 60% of agentic analytics projects relying solely on MCP will fail by 2028 without a semantic layer. Here is why MCP needs OSI and governed semantic definitions to deliver trustworthy AI.

The EU AI Act Hits in August. 93% of Companies Aren't Ready.

The EU AI Act enforces in August 2026. Article 10 demands data governance that 93% of enterprises don't have. The fix isn't more AI. It's better data architecture.

Ready to unlock your data potential?

Let's talk about how we can transform your data into actionable insights.

Get in touch