Gartner just issued a warning that should stop every data team mid-sprint. By 2028, 60% of agentic analytics projects relying solely on the Model Context Protocol will fail due to the absence of a consistent semantic layer. That prediction, delivered by Andres Garcia-Rodeja at the Gartner Data and Analytics Summit in March 2026, cuts straight through the current MCP hype cycle.

MCP is everywhere right now. Anthropic launched it. Every data tool is shipping integrations. The promise is simple: a universal protocol for connecting AI agents to data sources. But connectivity is not context. And Gartner is saying that the gap between the two will kill more than half of agentic analytics projects within two years.

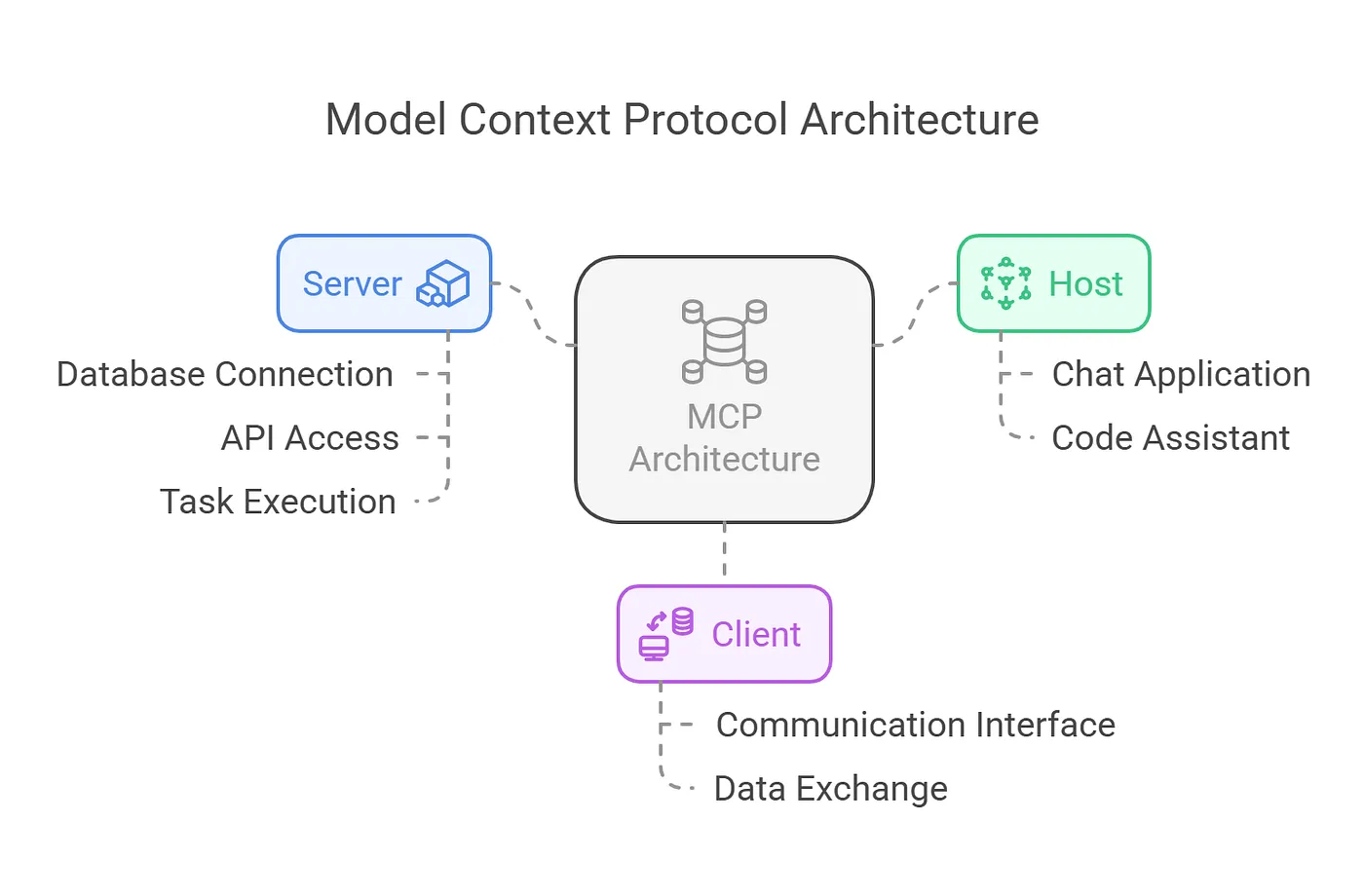

What MCP actually does

The Model Context Protocol is a standard for connecting AI agents to external data systems. Think of it as a USB port for AI. Any agent that speaks MCP can plug into any data source that exposes an MCP server. No custom integrations. No bespoke API wrappers. One protocol, universal access.

This solves a real problem. Before MCP, every AI agent integration was custom-built. Connecting Claude to your Snowflake warehouse required different code than connecting it to your PostgreSQL database. MCP standardizes that plumbing.
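Here is a minimal sketch of the kind of message an MCP client sends, to make the "one protocol" point concrete. MCP messages are JSON-RPC 2.0, and the `tools/call` method with `name`/`arguments` params follows the published spec. The tool name `run_sql` and its `query` argument are hypothetical stand-ins for whatever a given warehouse's MCP server actually exposes.

```python
import json


def make_tool_call(request_id: int, tool: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request that invokes an MCP tool via `tools/call`."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message)


# The same envelope works whether the server fronts Snowflake or PostgreSQL;
# only the (hypothetical) tool name and arguments change.
snowflake_call = make_tool_call(1, "run_sql", {"query": "SELECT * FROM orders LIMIT 10"})
postgres_call = make_tool_call(2, "run_sql", {"query": "SELECT * FROM orders LIMIT 10"})
print(snowflake_call)
```

Notice that nothing in the envelope says what `orders` or any of its columns mean.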

But here is what MCP does not do. It does not tell the agent what the data means. It does not explain that "revenue" in your finance schema uses accrual accounting while "revenue" in your sales schema counts bookings at signature. It does not know that "active customer" means something different to the product team than to the retention team. MCP delivers rows and columns. It does not deliver business logic.
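The sketch below computes "revenue" two ways over the same contracts, which shows why a shared column name is not enough. The `Contract` shape and integer month indexing are hypothetical simplifications. Real revenue recognition rules are far more involved.

```python
from dataclasses import dataclass


@dataclass
class Contract:
    signed_month: int  # month index when the contract was signed
    total: float       # total contract value
    term_months: int   # service is delivered evenly over this many months


def bookings_revenue(contracts, start, end):
    """Sales view: count the full contract value in the month it was signed."""
    return sum(c.total for c in contracts if start <= c.signed_month <= end)


def accrual_revenue(contracts, start, end):
    """Finance view: recognize value evenly as the service is delivered."""
    recognized = 0.0
    for c in contracts:
        monthly = c.total / c.term_months
        for month in range(c.signed_month, c.signed_month + c.term_months):
            if start <= month <= end:
                recognized += monthly
    return recognized


contracts = [Contract(1, 1200.0, 12), Contract(3, 600.0, 6)]
print(bookings_revenue(contracts, 1, 3))  # 1800.0
print(accrual_revenue(contracts, 1, 3))   # 400.0
```

Both functions read the same rows and both answer "revenue for months 1 to 3." An agent that only sees the rows has no basis for choosing between them.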

The semantic layer gap

This is the gap Gartner is warning about. MCP gives AI agents access to your data. A semantic layer gives them an understanding of it. Without understanding, access is dangerous.

Consider what happens when an AI agent with MCP access queries your data warehouse. The agent receives table schemas, column names, and raw data. It can write syntactically correct SQL. But it has no way to know which tables contain the canonical version of a metric, how dimensions should be joined, or which business rules apply to specific calculations.

The agent guesses. It picks the first table that looks relevant. It joins on whatever key seems to match. It returns an answer that is technically plausible and semantically wrong. And because the answer came from an AI agent querying the actual data warehouse, the person receiving it has no reason to doubt it.
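Here is a toy version of that failure mode. The table names and figures are invented for illustration. `naive_pick` is a deliberately crude stand-in for an agent that chooses tables from their names alone, and `CANONICAL` plays the role of a declared definition.

```python
# Hypothetical warehouse: three tables that all "look like" revenue.
TABLES = {
    "revenue_staging": 1_450_000,   # raw loads, still includes refunds
    "revenue_daily_v2": 1_310_000,  # abandoned rewrite, no longer maintained
    "fct_revenue": 1_280_000,       # the table finance actually reports from
}

# What a semantic layer would declare; without one, the agent never sees this.
CANONICAL = {"revenue": "fct_revenue"}


def naive_pick(metric: str) -> str:
    """Mimic an agent that grabs the first table whose name mentions the metric."""
    for name in TABLES:  # dicts preserve insertion order in Python 3.7+
        if metric in name:
            return name
    raise LookupError(metric)


def governed_pick(metric: str) -> str:
    """Resolve the metric through a declared definition instead of guessing."""
    return CANONICAL[metric]


guess = naive_pick("revenue")
print(guess, TABLES[guess])    # revenue_staging 1450000
answer = governed_pick("revenue")
print(answer, TABLES[answer])  # fct_revenue 1280000
```

Both answers come back from real warehouse tables, which is exactly why the wrong one looks credible.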

This is not a theoretical risk. Already, 28% of organizations report limited or no confidence in the quality of the data feeding their AI systems. That number will grow as agents get broader access without deeper context. MCP without a semantic layer is like handing someone the keys to a library after stripping the labels off every shelf.

What a semantic layer adds to MCP

A semantic layer sits between the raw data and anything that consumes it. It defines business logic in a machine-readable format: metrics, dimensions, relationships, access controls, and data quality rules. When an AI agent queries through a semantic layer instead of hitting raw tables over MCP, it does not need to guess.

Metric definitions become deterministic. Instead of the agent interpreting column names to calculate revenue, the semantic layer provides the exact formula, such as SUM(amount) WHERE status = 'completed' AND refunded = false. The same formula applies to every query, every time, from every tool.
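Here is a minimal sketch of what "deterministic" means in practice: a metric is stored once as data and rendered to SQL by a pure function, so identical inputs always produce identical queries. The `Metric` class, `compile_metric` function, and `orders` table are hypothetical. Real semantic layers such as MetricFlow have their own spec formats and far richer compilers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A governed metric: one formula, one source table, fixed filters."""
    name: str
    table: str
    expression: str
    filters: tuple = ()


def compile_metric(metric: Metric, group_by: tuple = ()) -> str:
    """Render a metric to SQL. The same input always yields the same string."""
    select = list(group_by) + [f"{metric.expression} AS {metric.name}"]
    sql = f"SELECT {', '.join(select)} FROM {metric.table}"
    if metric.filters:
        sql += " WHERE " + " AND ".join(metric.filters)
    if group_by:
        sql += " GROUP BY " + ", ".join(group_by)
    return sql


REVENUE = Metric(
    name="revenue",
    table="orders",
    expression="SUM(amount)",
    filters=("status = 'completed'", "refunded = false"),
)

print(compile_metric(REVENUE))
# SELECT SUM(amount) AS revenue FROM orders WHERE status = 'completed' AND refunded = false
print(compile_metric(REVENUE, group_by=("region",)))
```

The agent's job shrinks to choosing a metric and its dimensions. The formula and filters are not up for interpretation.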

Joins are declared, not inferred. The semantic layer defines how tables relate. Customer connects to Order through customer_id. Order connects to Product through line items. The agent follows declared relationships instead of guessing based on column name similarity.
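Declared relationships form a graph, and a join path is simply a walk through that graph. This sketch uses breadth-first search over the edges from the example above. The table and key names are hypothetical, and real tools add cardinality and fan-out checks that are omitted here.

```python
from collections import deque

# Declared relationships: (left table, right table) -> join condition.
RELATIONSHIPS = {
    ("customers", "orders"): "customers.customer_id = orders.customer_id",
    ("orders", "line_items"): "orders.order_id = line_items.order_id",
    ("line_items", "products"): "line_items.product_id = products.product_id",
}


def neighbors(table):
    """Yield (other table, join condition) for every declared edge touching `table`."""
    for (left, right), condition in RELATIONSHIPS.items():
        if left == table:
            yield right, condition
        elif right == table:
            yield left, condition


def join_path(start, goal):
    """Breadth-first search over declared edges; returns join conditions in order."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        table, path = queue.popleft()
        if table == goal:
            return path
        for nxt, condition in neighbors(table):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [condition]))
    raise ValueError(f"no declared relationship path from {start} to {goal}")


for condition in join_path("customers", "products"):
    print(condition)
```

When no declared path exists, the sketch refuses instead of falling back to matching column names.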

Access controls travel with the logic. The semantic layer can enforce that an agent operating on behalf of a marketing analyst sees different data than an agent operating on behalf of a finance director. MCP alone has no concept of row-level security or role-based access at the semantic level.
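Here is a rough sketch of where a role-based row filter belongs: on the source table, before any aggregation, so that every metric computed for that role inherits it. The roles, the `region = 'EMEA'` policy, and the function names are hypothetical. Production platforms typically enforce this natively, and building SQL strings like this only illustrates placement, not a secure implementation.

```python
# Hypothetical row policies keyed by the role the agent is acting for.
ROW_POLICIES = {
    "marketing_analyst": "region = 'EMEA'",
    "finance_director": None,  # no row restriction
}


def secure_source(table: str, role: str) -> str:
    """Return a FROM-clause source with the role's row filter applied first."""
    if role not in ROW_POLICIES:
        # Fail closed: an unknown role gets an error, not unrestricted data.
        raise PermissionError(f"no access policy defined for role {role!r}")
    policy = ROW_POLICIES[role]
    if policy is None:
        return table
    return f"(SELECT * FROM {table} WHERE {policy}) AS {table}"


def revenue_sql(role: str) -> str:
    """Compile the revenue metric on behalf of a specific role."""
    return f"SELECT SUM(amount) AS revenue FROM {secure_source('orders', role)}"


print(revenue_sql("finance_director"))
# SELECT SUM(amount) AS revenue FROM orders
print(revenue_sql("marketing_analyst"))
# SELECT SUM(amount) AS revenue FROM (SELECT * FROM orders WHERE region = 'EMEA') AS orders
```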

Data quality is built in. The semantic layer can flag when a metric's underlying data fails freshness or completeness checks. The agent knows not to serve stale numbers. Without this, MCP happily returns data from a pipeline that broke three days ago.
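A freshness gate can be as simple as comparing a source's last load time against a per-metric SLA before answering. The `FRESHNESS_SLA` table, the 24-hour threshold, and `StaleDataError` are hypothetical. How the last load time is obtained (pipeline metadata, an information schema, a catalog) varies by platform and is left out.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


class StaleDataError(RuntimeError):
    """Raised when a metric's source has not loaded within its freshness SLA."""


# Hypothetical freshness SLAs per metric.
FRESHNESS_SLA = {"revenue": timedelta(hours=24)}


def check_freshness(metric: str, last_loaded: datetime, now: Optional[datetime] = None) -> None:
    """Raise instead of returning numbers when the source is older than its SLA."""
    if now is None:
        now = datetime.now(timezone.utc)
    age = now - last_loaded
    if age > FRESHNESS_SLA[metric]:
        raise StaleDataError(
            f"{metric}: source last loaded {age} ago, SLA is {FRESHNESS_SLA[metric]}"
        )


now = datetime(2026, 3, 10, 12, 0, tzinfo=timezone.utc)
check_freshness("revenue", now - timedelta(hours=2), now)  # passes silently
try:
    check_freshness("revenue", now - timedelta(days=3), now)
except StaleDataError as exc:
    print("refusing to answer:", exc)
```

The pipeline that "broke three days ago" ends up as an explicit refusal rather than a confident wrong number.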

OSI: the standard that makes this portable

The challenge with semantic layers has always been fragmentation. Looker has LookML. Power BI has its tabular model. dbt has MetricFlow. Each tool defines business logic, but only for its own consumption.

The Open Semantic Interchange specification changes this. OSI is a vendor-neutral, open-source standard released as v1.0 in January 2026 under an Apache 2 license. It defines a universal format for semantic model constructs: datasets, metrics, dimensions, relationships, and context. Any tool that reads OSI can interpret the same business definitions.
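To illustrate what portability buys, here is a sketch of one definition file read by two different consumers. The document uses the construct names OSI describes (datasets, dimensions, metrics, relationships), but the field names are invented for this example and are **not** the OSI schema. `bi_tool_sql` and `agent_context` are hypothetical consumers.

```python
import json

# Illustrative only: construct names follow OSI's vocabulary, but these
# field names are NOT copied from the OSI specification.
MODEL_JSON = """
{
  "datasets": [{"name": "orders", "source": "analytics.orders"}],
  "dimensions": [{"name": "region", "dataset": "orders", "column": "region"}],
  "metrics": [{
    "name": "revenue",
    "dataset": "orders",
    "expression": "SUM(amount)",
    "filters": ["status = 'completed'", "refunded = false"]
  }],
  "relationships": []
}
"""


def load_model(document):
    """Parse the shared document into dataset sources and metric definitions."""
    model = json.loads(document)
    datasets = {d["name"]: d["source"] for d in model["datasets"]}
    metrics = {m["name"]: m for m in model["metrics"]}
    return datasets, metrics


def bi_tool_sql(metric, datasets):
    """One consumer (say, a BI tool) compiles the definition to SQL."""
    sql = f"SELECT {metric['expression']} FROM {datasets[metric['dataset']]}"
    if metric["filters"]:
        sql += " WHERE " + " AND ".join(metric["filters"])
    return sql


def agent_context(metric):
    """Another consumer (say, an AI agent) turns it into prompt context."""
    rules = " and ".join(metric["filters"]) or "no filters"
    return f"{metric['name']} is {metric['expression']} where {rules}"


datasets, metrics = load_model(MODEL_JSON)
print(bi_tool_sql(metrics["revenue"], datasets))
print(agent_context(metrics["revenue"]))
```

The two outputs look different, but both derive from one definition. Changing the filter in the file changes both consumers at once.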

The partner list tells the story. Snowflake, dbt Labs, Salesforce, Databricks, ThoughtSpot, Omni, Alation, Atlan, Collibra, Sigma, BlackRock, JPMC, Denodo, Mistral AI, and over 30 others. When every major data platform commits to the same semantic specification, it stops being a feature and starts being infrastructure.

OSI is the layer that makes MCP trustworthy. MCP provides the connectivity. OSI provides the context. Together, they give AI agents both access and understanding. Learn more about OSI adoption in our overview of the Open Semantic Interchange standard.

The Snowflake approach: Semantic View Autopilot

Snowflake is already building this combination into its platform. Semantic View Autopilot, announced at BUILD London and now generally available, uses AI to automatically create and maintain governed semantic views. These views embed business logic, metric definitions, and access controls directly into the data platform.

The result is that AI agents querying through Snowflake's Cortex or external tools can consult the semantic view before writing SQL. The agent knows what "revenue" means. It knows which joins are valid. It knows which users can see which data. And because the semantic views are OSI-compatible, those definitions are portable to any other tool in the stack.

Early adopters including eSentire, HiBob, Simon AI, and VTS report cutting semantic model creation from days to minutes. That speed matters because the biggest barrier to semantic layer adoption has never been technology. It has been the effort required to create and maintain the definitions. Autopilot removes that barrier.

The architecture that works

Gartner's recommendation at the Summit was clear. Success requires complementing MCP with knowledge graphs, ontologies, and governed semantic layers that give agents coherent, reliable context for multi-step analytics. The architecture that works has three components.

MCP for connectivity. The protocol handles the plumbing. Agents connect to data sources through a standard interface. This layer is essentially solved.

OSI-compatible semantic layer for context. Business logic, metric definitions, relationships, and governance rules are defined once in OSI format. dbt's MetricFlow, Snowflake's Semantic Views, or any OSI-compatible tool can serve as the authoring environment. The definitions are portable and machine-readable.

Data governance for trust. Access controls, data quality monitoring, lineage tracking, and audit trails ensure that agents operate within guardrails. Gartner predicts that by 2030, 50% of organizations will use autonomous AI agents to interpret governance policies into machine-verifiable data contracts. Read more about data governance for AI agents on our blog.
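A "machine-verifiable data contract" is easier to picture with an example. The contract below is hand-written rather than generated by an agent, and its columns, types, and nullability rules are hypothetical. The point is only that a policy like "amount must never be null" becomes something a program can check on every batch.

```python
# Hypothetical contract for an orders feed: column -> (expected type, nullable).
ORDERS_CONTRACT = {
    "order_id": (str, False),
    "amount": (float, False),
    "status": (str, False),
    "refunded": (bool, False),
    "coupon_code": (str, True),
}


def verify_contract(rows, contract):
    """Return human-readable violations; an empty list means the batch conforms."""
    violations = []
    for i, row in enumerate(rows):
        for column, (expected_type, nullable) in contract.items():
            if column not in row:
                violations.append(f"row {i}: missing column {column!r}")
                continue
            value = row[column]
            if value is None:
                if not nullable:
                    violations.append(f"row {i}: {column!r} is null")
            elif not isinstance(value, expected_type):
                violations.append(
                    f"row {i}: {column!r} expected {expected_type.__name__}, "
                    f"got {type(value).__name__}"
                )
    return violations


batch = [
    {"order_id": "A1", "amount": 99.0, "status": "completed", "refunded": False, "coupon_code": None},
    {"order_id": "A2", "amount": None, "status": "completed", "refunded": "no"},
]
for problem in verify_contract(batch, ORDERS_CONTRACT):
    print(problem)
```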

Skip any one of these layers and the system breaks. MCP without semantics produces wrong answers. Semantics without governance produces unauthorized answers. Governance without connectivity produces no answers at all.

What to do this quarter

If you are building agentic analytics capabilities right now, here is how to avoid being part of Gartner's 60%.

Do not ship MCP integrations without a semantic layer underneath. Every MCP connection to a data source should route through governed semantic definitions. If the agent can bypass the semantic layer and query raw tables directly, it will. And it will get things wrong.
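One way to make bypass impossible rather than merely discouraged is to design the MCP server so that its only tool takes a metric name. This dispatcher sketches the server-side logic and omits the MCP SDK wiring. The tool names `get_metric` and `run_sql` and the metric catalog are hypothetical.

```python
# Hypothetical governed catalog: the only queries the agent can trigger.
GOVERNED_METRICS = {
    "revenue": (
        "SELECT SUM(amount) AS revenue FROM orders "
        "WHERE status = 'completed' AND refunded = false"
    ),
    "completed_orders": (
        "SELECT COUNT(*) AS completed_orders FROM orders "
        "WHERE status = 'completed'"
    ),
}


def handle_tool_call(name, arguments):
    """Dispatch an agent's tool call on the server side.

    Only `get_metric` is exposed. There is deliberately no tool that accepts
    free-form SQL, so the agent cannot route around the definitions.
    """
    if name != "get_metric":
        raise PermissionError(f"tool {name!r} is not exposed by this server")
    metric = arguments.get("metric")
    if metric not in GOVERNED_METRICS:
        raise ValueError(f"unknown metric {metric!r}; available: {sorted(GOVERNED_METRICS)}")
    # A real server would execute this query and return rows; the sketch
    # returns the governed SQL so the routing logic is easy to inspect.
    return GOVERNED_METRICS[metric]


print(handle_tool_call("get_metric", {"metric": "revenue"}))
try:
    handle_tool_call("run_sql", {"query": "SELECT * FROM revenue_staging"})
except PermissionError as exc:
    print(exc)
```

The design choice here is subtraction. Because the raw-SQL tool is never registered, the agent has nothing to "decide" to bypass.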

Start with your highest-impact metrics. You do not need to define every metric on day one. Pick the 20 metrics your leadership team uses to make decisions. Define them formally in dbt MetricFlow or your semantic tool of choice, then export them to OSI format. In most organizations, that short list covers the bulk of what agents will be asked about.

Evaluate Semantic View Autopilot if you are on Snowflake. The ability to auto-generate semantic views from existing table structures dramatically reduces the time to value. Manual semantic modeling is what stopped most teams in the past. Automation changes the economics.

Budget for semantics as infrastructure. Gartner expects the semantic layer to rank alongside cybersecurity as critical infrastructure by 2030. That means it needs a line item, a team, and ongoing maintenance. It is not a one-time project.

For every dollar spent on AI, six should go to data architecture. The semantic layer is where those six dollars have the most immediate impact on AI agent performance. MCP opened the door. The semantic layer decides whether what walks through it can be trusted.
