131 Days. That's How Long You Have.

On August 2, 2026, the EU AI Act's high-risk provisions become enforceable. Every company operating high-risk AI systems in Europe will need to prove compliance with Articles 8 through 15. That includes conformity assessments, technical documentation, risk management frameworks, and human oversight protocols.

But here's the provision that should keep data leaders up at night: Article 10.

Article 10 is titled "Data and Data Governance." It requires that training, validation, and testing datasets be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete. It mandates documented data governance practices covering design choices, data collection, preparation, and bias detection. It demands that datasets reflect the specific geographical, contextual, and behavioral characteristics of the environment where the AI system operates.

In plain English: regulators are about to audit your data foundation. And according to a March 2026 report from Cloudera and Harvard Business Review Analytic Services, only 7% of enterprises say their data is completely ready for AI.

93% are not.

The Readiness Gap Nobody Wants to Talk About

The Cloudera/HBR findings aren't an outlier. They're the latest data point in a pattern that's been building for years.

Deloitte's State of AI in the Enterprise 2026 report shows that AI governance readiness sits at just 30%. Data management readiness is at 40%. Talent readiness is at 20%. Meanwhile, 71% of companies are actively using or piloting AI across customer service, IT, HR, and finance. Only 30% feel fully prepared to operationalize these tools end to end.

DATAVERSITY research tells the same story from a different angle. Only 15% of organizations have mature data governance. 62% report incomplete data. 58% cite capture inconsistencies as a primary challenge.

These aren't AI problems. They're data infrastructure problems. And the EU AI Act is about to make them legal problems. For a deeper look at how data infrastructure underpins AI readiness, see our piece on building an AI-ready data architecture.

What Article 10 Actually Demands

Most coverage of the EU AI Act focuses on the flashy stuff. Prohibited practices. Deepfake disclosure. Foundation model obligations. But for companies deploying high-risk AI systems in areas like HR, credit scoring, insurance, or critical infrastructure, Article 10 is where the real data governance work lives.

Here's what it requires:

Data governance and management practices that address design choices, data collection processes, data preparation operations (annotation, labeling, cleaning, enrichment), formulation of assumptions, and prior assessment of data availability, quantity, and suitability.

Training, validation, and testing datasets that are relevant, sufficiently representative, and to the best extent possible free of errors and complete in view of the intended purpose.

Examination for biases that are likely to affect the health and safety of persons, have a negative impact on fundamental rights, or lead to discrimination. Where biases are detected, appropriate measures to address them.

Statistical properties appropriate to the persons or groups of persons on whom the high-risk AI system is intended to be used, including specific geographical, contextual, behavioral, or functional characteristics.

Read that list again. This isn't about having a model that performs well on a benchmark. This is about proving that the data underneath the model is governed, documented, representative, and clean. It's a legal requirement for what should have been an engineering standard all along.
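To make "complete" and "sufficiently representative" less abstract, here is a minimal sketch of two checks in plain Python. Everything in it is an assumption for illustration: the field names, the expected population shares, and the 5-point tolerance are hypothetical, and Article 10 does not prescribe any specific threshold.

```python
from collections import Counter

def missing_rate(rows, field):
    """Share of rows where `field` is absent or None."""
    if not rows:
        return 0.0
    missing = sum(1 for row in rows if row.get(field) is None)
    return missing / len(rows)

def representation_gaps(rows, field, expected_shares, tolerance=0.05):
    """Groups whose share in the data differs from the expected share
    by more than `tolerance` (observed minus expected)."""
    counts = Counter(row.get(field) for row in rows)
    total = len(rows)
    gaps = {}
    for group, expected in expected_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if abs(observed - expected) > tolerance:
            gaps[group] = round(observed - expected, 3)
    return gaps

# Hypothetical training sample and hypothetical target population shares
rows = [
    {"country": "DE", "age": 34},
    {"country": "DE", "age": None},
    {"country": "DE", "age": 51},
    {"country": "FR", "age": 29},
]
expected = {"DE": 0.5, "FR": 0.5}

print(missing_rate(rows, "age"))                        # 0.25
print(representation_gaps(rows, "country", expected))   # {'DE': 0.25, 'FR': -0.25}
```

Checks like these don't prove compliance on their own. They give you numbers you can record next to each dataset, which is the kind of evidence the documentation requirement is asking for.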

The Pattern Repeats. Again.

I've seen this before. More than once.

In 2018, every company was hiring Data Scientists. They were going to unlock insights, build predictive models, transform the business. What actually happened? Most of those Data Scientists spent 80% of their time cleaning data. The models never shipped because the data infrastructure wasn't there.

In 2022, it was dashboards and self-serve analytics. Companies bought every BI tool on the market. But the dashboards showed different numbers depending on who built them, because there was no single source of truth. No semantic layer. No governed definitions of what "revenue" or "active user" actually meant.

In 2024, it was generative AI. Every company spun up a chatbot, a copilot, an AI assistant. NVIDIA's State of AI report found that 88% of companies were using AI, but only 39% saw measurable impact. The models were fine. The data underneath them was a mess.

Now it's 2026. The EU AI Act is forcing the same conversation about data governance, but with legal teeth. And the companies that didn't fix their data foundation for the Data Scientists, didn't fix it for the dashboards, and didn't fix it for the AI models are now facing fines of up to 15 million euros or 3% of global annual turnover for non-compliance with high-risk obligations.

The pattern is always the same: companies allocate intelligence to the wrong layer. They invest in the most visible technology while ignoring the infrastructure that makes it work.

Why This Is Actually a Data Architecture Problem

I use a framework called the Intelligence Allocation Stack to explain where companies go wrong. It has four layers:

Layer 1: Data Foundation. Data governance, data quality, ingestion pipelines, warehousing, single source of truth.

Layer 2: Semantic Layer. Business logic translated for machines. Metric definitions. Governed vocabulary.

Layer 3: Orchestration Layer. Data pipelines, CRM syncs, reverse ETL, workflow automation, API integrations.

Layer 4: AI Layer. AI agents, conversational AI, autonomous systems, predictive models.

Most companies start at Layer 4 and work backwards. They deploy the AI agent, discover it hallucinates because the underlying data is inconsistent, then scramble to fix governance after the fact.

The EU AI Act's Article 10 is essentially a legal mandate for Layer 1 and Layer 2. It doesn't care about your model architecture. It cares about whether your training data is governed, documented, representative, and free of bias. That's data infrastructure work. That's EU AI Act data governance work. That's the work companies have been skipping for a decade.

For every dollar companies spend on AI, they should be spending six on the data architecture underneath it. The EU hasn't mandated that ratio, but it has made the underlying data work a compliance requirement. You can explore how to structure that investment in our guide on the Intelligence Allocation Stack.

Finland Is Already Enforcing. The Rest Are Coming.

On January 1, 2026, Finland became the first EU member state with fully operational AI Act enforcement powers. The Finnish Transport and Communications Agency (Traficom) and the Office of the Data Protection Ombudsman now have the authority to conduct inspections, request documentation, and issue orders to halt non-compliant AI systems.

Other national competent authorities across the EU are activating throughout the first half of 2026. By the time August 2 arrives, enforcement infrastructure will be in place across member states.

And the penalties aren't theoretical. Up to 35 million euros or 7% of global annual turnover for prohibited practices. Up to 15 million euros or 3% for non-compliance with high-risk obligations. Up to 7.5 million euros or 1% for supplying incorrect information.

For context, GDPR's maximum fine is 4% of global turnover. The EU AI Act goes to 7%. They're not playing around.
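To put those ceilings in concrete terms, here is a small sketch of the arithmetic. It assumes the higher of the fixed amount and the turnover percentage applies, which is how the ceiling works for larger undertakings (for SMEs the lower figure applies instead). The tier names are hypothetical labels, and the result is a maximum, not a prediction of any actual fine.

```python
# Ceilings as quoted above: (fixed amount in EUR, basis points of global annual turnover)
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 700),     # 7%
    "high_risk_obligation": (15_000_000, 300),    # 3%
    "incorrect_information": (7_500_000, 100),    # 1%
}

def penalty_ceiling(tier, global_turnover_eur):
    """Maximum possible fine in EUR, assuming 'whichever is higher' applies."""
    fixed, bps = PENALTY_TIERS[tier]
    turnover_based = global_turnover_eur * bps // 10_000
    return max(fixed, turnover_based)

print(penalty_ceiling("high_risk_obligation", 2_000_000_000))  # 60000000
print(penalty_ceiling("high_risk_obligation", 100_000_000))    # 15000000
```

For a company with 2 billion euros in turnover, the percentage is what bites. For a smaller one, the fixed amount sets the ceiling.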

What the 93% Need to Do Before August

If your data isn't AI-ready today, you're not going to build a perfect data foundation in 131 days. But you can take the steps that matter most for Article 10 compliance and long-term AI readiness.

Inventory your AI systems. Over half of organizations don't even know which AI systems they have in production. You can't assess the compliance of systems you haven't catalogued. Start here.

Classify risk levels. Not every AI system falls under high-risk. But the ones that do — HR tools, credit scoring, insurance underwriting, critical infrastructure — need Article 10 compliance. Know which ones are in scope.
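The first two steps can start as something very small. Below is a minimal sketch of an inventory with a first-pass scope flag. The system names, owners, and use-case labels are hypothetical, and the high-risk set only mirrors the examples named in this article. Real classification needs legal review against the Act's own categories.

```python
from dataclasses import dataclass

# Hypothetical labels based on the examples in this article, not a legal taxonomy
HIGH_RISK_USE_CASES = {
    "hr",
    "credit_scoring",
    "insurance_underwriting",
    "critical_infrastructure",
}

@dataclass
class AISystem:
    name: str
    owner: str
    use_case: str
    in_production: bool = True

    @property
    def in_article_10_scope(self) -> bool:
        """First-pass flag only; a lawyer makes the final call."""
        return self.use_case in HIGH_RISK_USE_CASES

inventory = [
    AISystem("cv-screener", "people-ops", "hr"),
    AISystem("support-chatbot", "customer-care", "customer_service"),
    AISystem("loan-scorer", "risk", "credit_scoring"),
]

in_scope = [system.name for system in inventory if system.in_article_10_scope]
print(in_scope)  # ['cv-screener', 'loan-scorer']
```

A spreadsheet does the same job. The point is that every system has a named owner and an explicit in-scope or out-of-scope decision.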

Audit your data lineage. Article 10 requires documented data governance practices covering collection, preparation, and bias detection. If you can't trace where your training data came from and how it was processed, that's your biggest gap.
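What a traceable lineage record might look like, as a rough sketch: the dataset name, source, and step descriptions below are hypothetical, and the structure is one possible shape, not a format the Act defines.

```python
import hashlib
import json
from dataclasses import dataclass, field, asdict

@dataclass
class LineageStep:
    operation: str       # e.g. "collect", "clean", "label"
    description: str
    performed_by: str

@dataclass
class DatasetLineage:
    dataset_name: str
    source: str
    steps: list = field(default_factory=list)

    def add_step(self, operation, description, performed_by):
        self.steps.append(LineageStep(operation, description, performed_by))

    def fingerprint(self):
        """SHA-256 of the full record, so later changes are detectable."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

# Hypothetical example
lineage = DatasetLineage("loan_applications_2025", "core-banking export")
lineage.add_step("collect", "Exported closed applications", "data-eng")
before = lineage.fingerprint()
lineage.add_step("clean", "Dropped rows with missing income", "data-eng")
after = lineage.fingerprint()

print(len(lineage.steps), before != after)  # 2 True
```

Storing the fingerprint alongside each trained model version gives you a simple way to show which data preparation history a given model was built on.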

Establish a semantic layer. Governed metric definitions and business logic ensure that the data feeding your AI systems means the same thing everywhere. This is Layer 2 of the Intelligence Allocation Stack, and it's what turns raw data into AI-ready data.
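A semantic layer is usually a dedicated tool, but the core idea fits in a few lines. This sketch assumes a hypothetical in-memory registry where each metric has exactly one governed definition and one owner. The invoice fields and the revenue rule are made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Metric:
    name: str
    definition: str                      # human-readable, governed wording
    owner: str
    compute: Callable[[list], float]     # the single agreed calculation

REGISTRY = {}

def register(metric):
    """Refuse a second definition for the same metric name."""
    if metric.name in REGISTRY:
        raise ValueError(f"Metric '{metric.name}' is already defined")
    REGISTRY[metric.name] = metric

register(Metric(
    name="revenue",
    definition="Sum of paid invoice amounts in EUR, excluding refunds",
    owner="finance",
    compute=lambda invoices: sum(
        inv["amount_eur"] for inv in invoices if inv["status"] == "paid"
    ),
))

invoices = [
    {"amount_eur": 100.0, "status": "paid"},
    {"amount_eur": 40.0, "status": "refunded"},
    {"amount_eur": 60.0, "status": "paid"},
]
print(REGISTRY["revenue"].compute(invoices))  # 160.0
```

The duplicate check is the important part. Dashboards and AI systems that all read "revenue" from one place can't quietly disagree about what it means.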

Test for bias systematically. Article 10 requires examination for biases that affect health, safety, fundamental rights, or lead to discrimination. This isn't optional. Build it into your data pipeline, not your compliance checklist.
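One way to build a bias check into the pipeline is to compare outcome rates across groups on every run. The sketch below is a simple selection-rate comparison, one of many possible bias measures. The group labels and records are hypothetical, and the 0.8 threshold is borrowed from the US "four-fifths" employment convention purely as an assumed example. The EU AI Act does not set it.

```python
from collections import defaultdict

def selection_rates(records, group_field, outcome_field):
    """Share of positive outcomes per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for record in records:
        group = record[group_field]
        totals[group] += 1
        if record[outcome_field]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}

def disparity_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means identical rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0

# Hypothetical labelled outcomes
records = (
    [{"group": "A", "approved": True}] * 3
    + [{"group": "A", "approved": False}]
    + [{"group": "B", "approved": True}]
    + [{"group": "B", "approved": False}] * 3
)

ASSUMED_THRESHOLD = 0.8  # illustrative only, not from the Act

rates = selection_rates(records, "group", "approved")
ratio = disparity_ratio(rates)
needs_review = ratio < ASSUMED_THRESHOLD

print(rates)         # {'A': 0.75, 'B': 0.25}
print(needs_review)  # True
```

Run as a pipeline step, a flag like `needs_review` can block a dataset from reaching training until someone has looked at it and recorded what they did about it.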

Document everything. The EU AI Act requires technical documentation that records design decisions, data lineage, and testing methodologies. Companies practicing agile development will struggle to create this retrospectively. Start documenting now.

The Silver Lining

Here's what I've learned from working with companies across fintech, e-commerce, SaaS, and sustainability: regulatory pressure often forces the right architectural decisions.

I co-founded a company focused on CSRD and ESG reporting. The pattern was identical. Companies didn't invest in sustainability data infrastructure until regulation demanded it. But the ones that did it well didn't just achieve compliance. They built data assets that made every downstream process better.

The EU AI Act will do the same thing for AI data governance. The companies that treat Article 10 as a checkbox will build the minimum. The companies that treat it as a catalyst will build the data foundation that makes their AI actually work. Learn how to make that shift in our overview of a practical data governance strategy.

IDC research shows that companies with mature data governance see 24% higher revenue from AI initiatives. That's not a compliance benefit. That's a competitive advantage.

The 93% who aren't ready have a choice. They can panic about the deadline, or they can use it as the forcing function to finally fix the floor before they let the agents run.

131 days. Start at Layer 1.

More data strategy insights like this

Join data and operations leaders getting Unwind's biweekly roundup on turning data into decisions.

More from Unwind Data

BI Migration Approach: What Actually Works and What Breaks

Most BI migrations fail because teams migrate dashboards instead of fixing the logic underneath them. Here is a honest account of what works, what breaks, and why the semantic layer is where every migration should start.

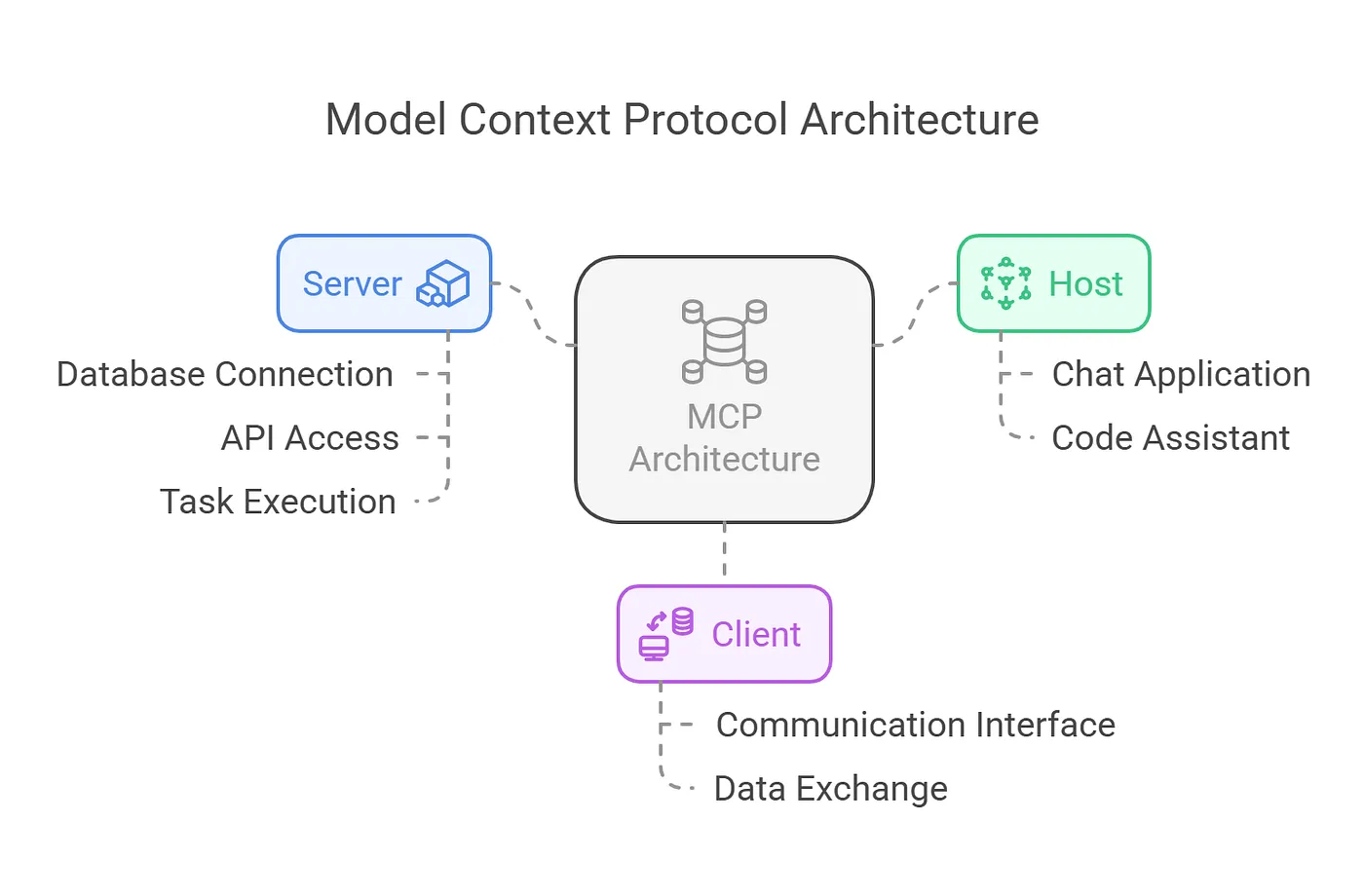

Why MCP Without a Semantic Layer Will Fail

Gartner warns 60% of agentic analytics projects relying solely on MCP will fail by 2028 without a semantic layer. Here is why MCP needs OSI and governed semantic definitions to deliver trustworthy AI.

90% of Companies Are Weakening AI Agent Governance to Ship Faster. Here's Why That Backfires.

90% of organizations pressure security teams to loosen AI controls. Meanwhile, companies with AI governance ship 12x more projects to production. The fix isn't speed. It's data architecture.

Ready to unlock your data potential?

Let's talk about how we can transform your data into actionable insights.

Get in touch