The Semantic Layer Just Became an Industry Standard

Snowflake, Microsoft, dbt Labs, Salesforce, and Databricks all converged on the semantic layer as critical AI infrastructure within the same quarter. The Open Semantic Interchange spec is now the standard.

In the span of a single quarter, Snowflake, Microsoft, Salesforce, Databricks, dbt Labs, and Denodo all made the same bet. The semantic layer is no longer a feature. It is infrastructure. And if you are still treating it as optional, you are already behind.

The Open Semantic Interchange specification hit v1.0 in January. By March, the partner list read like a who's who of enterprise data: Snowflake, dbt Labs, Salesforce, Databricks, Atlan, Alation, Qlik, and BlackRock. Then Microsoft announced first-class dbt adapters for Fabric. Sema4.ai launched a semantic layer for AI agents at the Gartner Data and Analytics Summit. And Gartner itself declared that universal semantic layers will be critical infrastructure by 2030.

That is not a trend. That is a semantic layer industry standard being born.

What the Open Semantic Interchange actually is

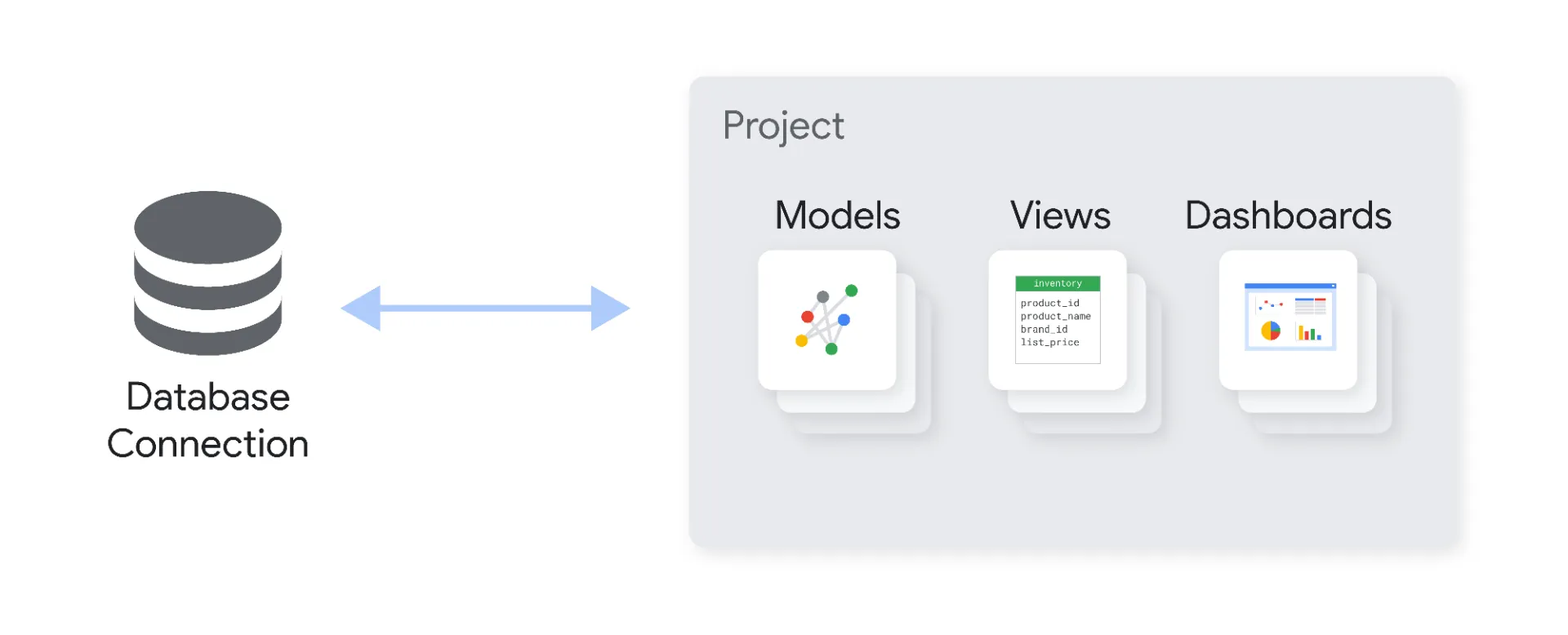

OSI is a vendor-neutral, open-source specification for representing semantic layer constructs. Datasets, metrics, dimensions, relationships, and context. All defined in a format that any tool, platform, or AI agent can interpret consistently.

Think about what this solves. Today, your definition of "monthly recurring revenue" exists in your BI tool. A different version exists in your dbt models. Another lives in someone's spreadsheet. Your AI agent picks whichever one it finds first. OSI creates a single specification that all of these tools can read and write. One definition. Everywhere.
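To make "one definition, everywhere" concrete, here is a minimal Python sketch. It is not the OSI schema: the class names, fields, and the `subscriptions` table are assumptions invented for illustration. The point is only that a single metric object can feed both a BI-style SQL query and the plain-language context handed to an AI agent.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Dimension:
    name: str    # business-facing name, e.g. "month"
    column: str  # physical column in the dataset


@dataclass(frozen=True)
class Metric:
    name: str
    description: str
    dataset: str                   # table the metric is computed from
    expression: str                # SQL aggregate expression
    filters: tuple[str, ...] = ()  # optional row-level conditions


@dataclass
class SemanticModel:
    """Hypothetical container; the real OSI format may look quite different."""
    metrics: dict[str, Metric] = field(default_factory=dict)
    dimensions: dict[str, Dimension] = field(default_factory=dict)

    def to_sql(self, metric_name: str, by: str) -> str:
        """Render the metric as SQL, grouped by one dimension."""
        m = self.metrics[metric_name]
        d = self.dimensions[by]
        where = f" WHERE {' AND '.join(m.filters)}" if m.filters else ""
        return (
            f"SELECT {d.column} AS {d.name}, {m.expression} AS {m.name} "
            f"FROM {m.dataset}{where} GROUP BY {d.column}"
        )

    def to_agent_context(self, metric_name: str) -> str:
        """Render the same metric as text an AI agent can be given."""
        m = self.metrics[metric_name]
        return f"{m.name}: {m.description} (computed as {m.expression} on {m.dataset})"


# One definition of monthly recurring revenue, with illustrative names.
model = SemanticModel(
    metrics={
        "mrr": Metric(
            name="mrr",
            description="Monthly recurring revenue from active subscriptions",
            dataset="subscriptions",
            expression="SUM(amount)",
            filters=("status = 'active'",),
        )
    },
    dimensions={"month": Dimension(name="month", column="billing_month")},
)

print(model.to_sql("mrr", by="month"))
print(model.to_agent_context("mrr"))
```

Both outputs derive from the same `Metric` object, so a change to the definition reaches every consumer at once. That is the property an interchange specification is meant to guarantee across tools rather than inside one script.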

The v1.0 spec was released under an Apache 2.0 license on January 27, 2026. Phase 2 targets native support in 50+ platforms by end of year. dbt Labs open-sourced MetricFlow as part of its commitment. Snowflake built OSI into its Universal AI Catalog. Denodo joined on March 24. This is moving fast. You can read the full OSI specification and roadmap on the Open Semantic Interchange GitHub repository, or read our article on semantic interoperability.

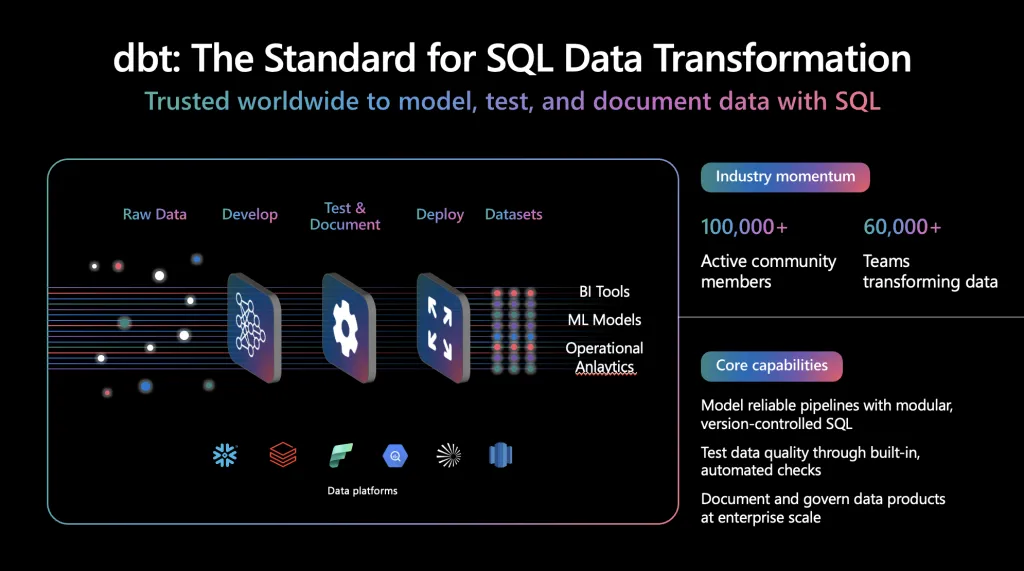

Why Microsoft adopting dbt matters more than Microsoft thinks

Microsoft's announcement about dbt on Fabric is interesting for what it admits, not what it promotes. Their own blog post calls dbt "the standard for analytics engineering." That is Microsoft, the company that spent years pushing Power BI's proprietary semantic model, acknowledging that the open ecosystem won.

The details are real. First-class dbt Core adapters for Fabric Warehouse and Lakehouse. dbt Jobs as the operational backbone with GitHub integration, OneLake logging, and pipeline orchestration. dbt Fusion support expected by Q2 2026. This is not a token integration. Microsoft is investing engineering resources because its enterprise customers demanded it.

Here is what this really signals. Data teams do not want to be locked into a single vendor's definition of business logic. They want their semantic layer to be portable. They want their metric definitions to work across warehouses, lakehouses, BI tools, and AI agents without rewriting them for each platform. dbt gives them that. Microsoft finally accepted it.

The same pattern played out with Snowflake and Iceberg. With Databricks and Delta Lake going open. With Salesforce joining OSI. Proprietary formats lose when the ecosystem demands interoperability. The semantic layer follows the exact same path.

The AI agent problem that forced the convergence

MIT Technology Review published a report in March 2026 that frames the issue clearly. AI agent capabilities are determined more by the soundness of enterprise data architecture and governance than by the evolution of the models themselves. Only 1 in 10 companies has scaled its AI agents beyond the pilot stage, even though 88% use AI in at least one business function.

The bottleneck is not the model. It is context.

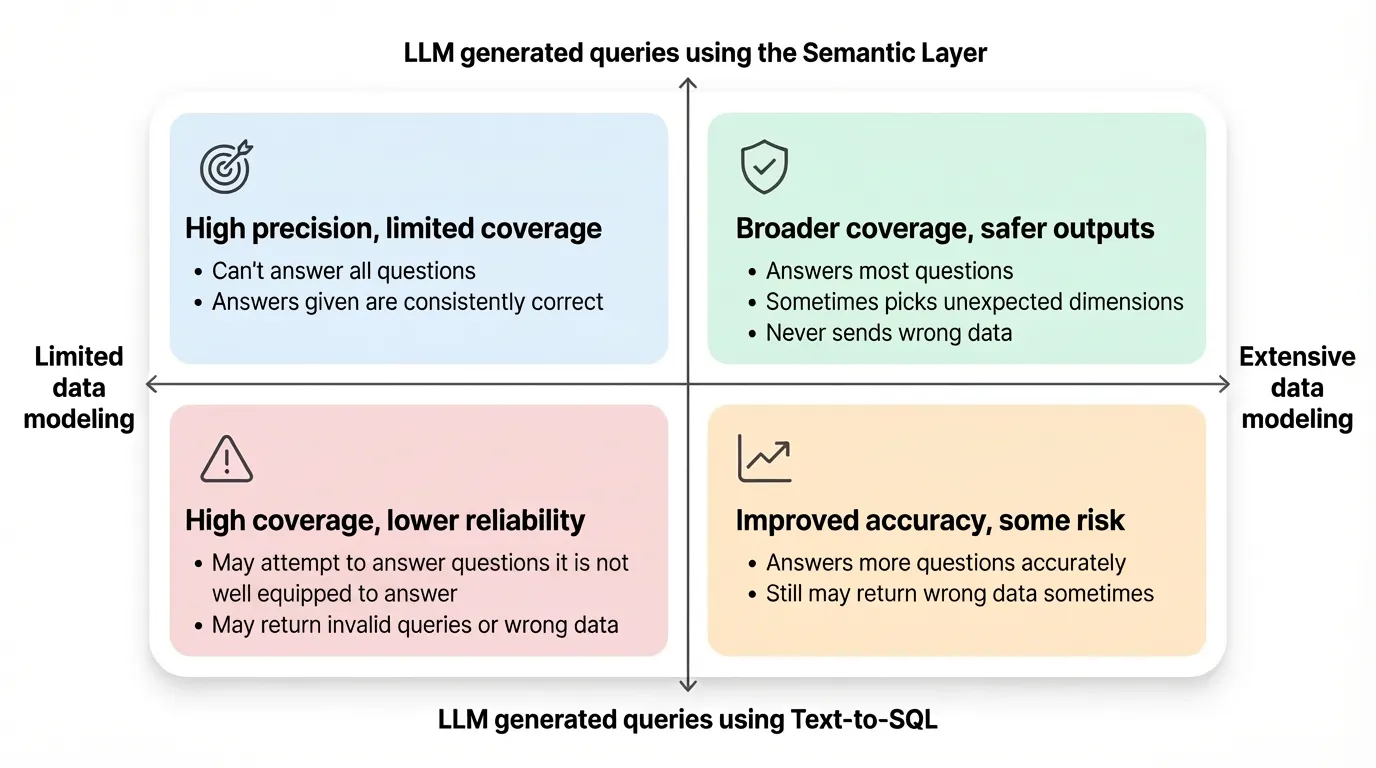

When an AI agent queries your data warehouse, it gets tables and columns. It does not get business logic. It does not know that "revenue" in the finance schema means something different from "revenue" in the marketing schema. It does not know that Q4 numbers need a specific accrual adjustment. Without a semantic layer, the agent is guessing. Confidently guessing, which is worse than not answering at all.
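The difference between guessing and knowing can be sketched in a few lines of Python. Everything here is hypothetical: the registry, the domain names, and the column names are assumptions chosen to mirror the "revenue" example above, not any vendor's API. The idea is that an agent asks the semantic layer for a definition and gets an explicit error when the term is ambiguous, instead of silently picking one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MetricDefinition:
    domain: str      # business domain that owns the definition
    name: str
    sql: str         # illustrative aggregate expression
    notes: str = ""  # business rules the agent must respect


class AmbiguousMetricError(LookupError):
    """Raised when a term maps to more than one governed definition."""


# Hypothetical registry: two teams both call their number "revenue".
REGISTRY = [
    MetricDefinition(
        "finance", "revenue", "SUM(recognized_amount)",
        "Recognized revenue; Q4 numbers need an accrual adjustment.",
    ),
    MetricDefinition(
        "marketing", "revenue", "SUM(attributed_order_value)",
        "Order value attributed to campaigns.",
    ),
]


def resolve(name: str, domain: Optional[str] = None, registry=REGISTRY) -> MetricDefinition:
    """Return the single definition for a term, or fail loudly."""
    matches = [
        m for m in registry
        if m.name == name and (domain is None or m.domain == domain)
    ]
    if not matches:
        raise KeyError(f"no governed definition for '{name}'")
    if len(matches) > 1:
        domains = sorted(m.domain for m in matches)
        raise AmbiguousMetricError(
            f"'{name}' is defined in several domains: {domains}; specify one"
        )
    return matches[0]


# With a domain, the agent gets the logic and the caveats that come with it.
finance_revenue = resolve("revenue", domain="finance")
print(finance_revenue.sql, "|", finance_revenue.notes)

# Without one, it is told to ask rather than guess.
try:
    resolve("revenue")
except AmbiguousMetricError as exc:
    print("needs clarification:", exc)
```

Raising on ambiguity is a deliberate design choice in this sketch: a refused answer can be corrected by the user, while a confident wrong number usually cannot.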

Gartner predicts that by 2030, 50% of organizations will use autonomous AI agents to interpret governance policies into machine-verifiable data contracts. That only works if those agents can read a universal semantic definition. Not a Snowflake-specific one. Not a Power BI-specific one. A universal one. That is exactly what OSI provides, and it is why settling on an industry standard for the semantic layer is the most consequential infrastructure decision data teams will make this decade.

Sema4.ai understood this early. Their semantic layer, launched at the Gartner Summit on March 9, lets AI agents analyze databases, documents, and spreadsheets together by providing a unified business context layer. No SQL required from the end user. The agent handles the translation because the semantic layer gives it the vocabulary.

What this means for data teams right now

If you are running dbt today, you are already closer to this standard than most. MetricFlow is open-source and OSI-aligned. Your metric definitions are portable. That is a strategic advantage.

If you are on Snowflake, the Universal AI Catalog with Horizon embeds OSI natively. Your governed semantic definitions travel with the data across Iceberg, Polaris, and connected platforms.

If you are on Fabric, the new dbt adapters mean you can finally use an open transformation layer instead of being locked into Power BI's proprietary approach. This is the single biggest improvement Microsoft has made for data engineering teams on their platform.

If you are on Databricks, Unity Catalog plus their OSI membership means semantic definitions can flow between your lakehouse and external tools.

The pattern across all four: the semantic layer is becoming the interoperability layer. Not compute. Not storage. The business logic layer that sits between your data and every tool that consumes it. If you are evaluating where to start, our semantic layer implementation guide walks through a practical path for each major platform.

The real competitive moat is not your model

Every company has access to the same foundation models. GPT, Claude, Gemini, Llama. The models are commoditizing fast. What is not commoditizing is your business context. Your specific definitions of customer lifetime value, churn risk, revenue recognition, and pipeline velocity. That knowledge lives in your semantic layer.

Companies with mature data governance see 24% higher revenue from AI, according to IDC. That advantage does not come from using a better model. It comes from giving any model the context it needs to deliver accurate, trustworthy answers specific to your business.

For every dollar spent on AI, six should go to data architecture. The industry's recent moves point the same way. When Snowflake, Microsoft, Salesforce, Databricks, and dbt Labs all invest in the same open specification within the same quarter, the message is clear. An industry-standard semantic layer is no longer a feature request. It is the foundation.

What happens next

OSI Phase 2 runs through Q4 2026. The target is native support in 50+ platforms, domain-specific extensions for verticals like finance and healthcare, and pilot programs with early adopters. By 2027, the initiative aims for de facto standard status.

Meanwhile, dbt Fusion is expanding adapter support across Snowflake, Databricks, BigQuery, and now Fabric. The Fusion engine, written in Rust, is significantly faster than dbt Core and natively understands SQL across multiple engine dialects. That speed matters when your semantic layer needs to compile and validate metric definitions across an entire data platform in seconds.
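To show what "validating metric definitions" means at its simplest, here is a small Python sketch. It is not how dbt Fusion or MetricFlow work internally; the catalog, the metric names, and the dictionary layout are all assumptions made for illustration. It checks that every metric points at a dataset and columns that actually exist.

```python
# Hypothetical warehouse catalog: dataset name -> set of known columns.
CATALOG = {
    "subscriptions": {"amount", "status", "billing_month"},
}

# Hypothetical metric definitions and the columns each one depends on.
METRICS = {
    "mrr": {"dataset": "subscriptions", "columns": ["amount", "status"]},
    "churned_accounts": {"dataset": "accounts", "columns": ["churned_at"]},
}


def validate(metrics: dict, catalog: dict) -> list[str]:
    """Return one human-readable error per broken metric definition."""
    errors = []
    for name, spec in sorted(metrics.items()):
        dataset = spec["dataset"]
        if dataset not in catalog:
            errors.append(f"{name}: unknown dataset '{dataset}'")
            continue  # cannot check columns of a dataset that does not exist
        missing = sorted(set(spec["columns"]) - catalog[dataset])
        if missing:
            errors.append(f"{name}: missing columns {missing} in '{dataset}'")
    return errors


for problem in validate(METRICS, CATALOG):
    print(problem)
```

A real engine does far more, such as parsing SQL and checking types across dialects, but the loop above is the shape of the work. The faster it runs, the more practical it is to check every definition on every change.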

Gartner predicts that through 2027, GenAI and AI agent use will create the first true challenge to mainstream productivity tools in 30 years, prompting a $58 billion market shakeup. The tools that survive that shakeup will be the ones that can read and write a universal semantic specification. The ones that cannot will become legacy.

The debate about whether the semantic layer matters is over. The only question left is whether you are building yours on an open standard or a proprietary one. The industry just made that decision for you.
